all-flash array (AFA)

What is an all-flash array?

An all-flash array (AFA), also known as a solid-state storage disk system or a solid-state array, is an external storage array that supports only flash media for persistent storage. In an AFA, flash memory is used in place of the spinning HDDs that traditionally are associated with networked storage systems.

Storage vendors typically sell all-flash arrays and hybrid arrays. The latter configuration combines a mix of SSDs and HDDs within the same chassis. A hybrid storage array enables a vendor to retrofit an existing disk system by replacing a portion of the fixed media with flash.

All-flash array design: Retrofit or purpose-built

Other vendors sell purpose-built systems natively designed from the ground up to only support flash. An all-flash model also embeds a range of software-defined storage features to manage data.

A defining characteristic of an AFA is the inclusion of native software services that enable users to perform data management and data protection directly on the array hardware. This is different from server-side flash installed on a standard x86 server. Inserting flash storage into a server is much cheaper than buying an all-flash array, but it also requires the purchase and installation of third-party management software to supply the needed data services.

This article is part of

Flash memory guide to architecture, types and products

Leading all-flash vendors have written algorithms for array-based services for data management, including clones, data compression and deduplication -- either an inline or post-process operation -- snapshots, replication and thin provisioning.

As with its disk-based counterpart, an all-flash array provides shared storage in a SAN or NAS environment.

How an all-flash array differs from disk

Flash memory, which has no moving parts, is a type of nonvolatile memory (NVM) that can be erased and reprogrammed in units of memory called blocks. It is a variation of erasable programmable read-only memory that got its name because the memory blocks can be erased with a single action, or flash. A flash array can transfer data to and from SSDs much faster than electromechanical disk drives.

The advantage of an all-flash array, relative to disk-based storage, is full bandwidth performance and lower latency when business applications make a query to read the data. The flash memory in an AFA typically comes in the form of SSDs, which are similar in design to an integrated circuit.

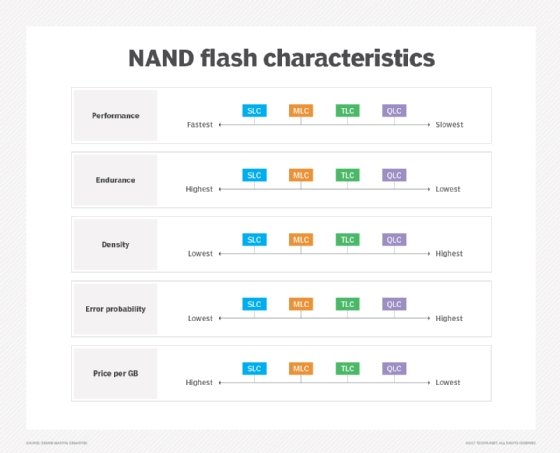

Flash is more expensive than spinning disk, but the development of multi-level cell (MLC) flash, triple-level cell (TLC) flash, NAND flash and 3D NAND flash has lowered the cost of ownership. These technologies enable greater flash density without the cost involved in shrinking NAND cells.

MLC flash is slower and less durable than single-level cell (SLC) flash, but companies have developed software that improves its wear level to make MLC acceptable for enterprise applications. SLC flash remains the choice for applications with the highest I/O requirements and is also widely used by public cloud providers. However, TLC flash reduces the cost more than MLC, though it also comes with performance and durability tradeoffs that can be mitigated with software.

All-flash array vs. hybrid array

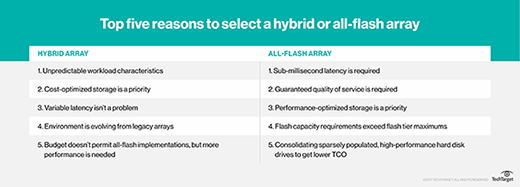

All-flash arrays are storage arrays containing only flash disks, whereas hybrid arrays contain a mixture of flash disks (SSDs) and traditional HDDs. The HDDs are commonly referred to as the capacity tier, whereas the SSDs are often called the performance tier.

Hybrid arrays are commonly used in two ways. The first option is for a storage admin to simply create a virtual disk on top of either the performance pool or the capacity pool. This gives the admin the flexibility to choose the type of storage and effective capacity that is most suitable for the workload. This can be especially useful in hybrid cloud environments because hybrid clouds often host a mixture of workloads with varying storage requirements.

The other option is to use the performance tier as a cache. Hot data -- i.e., data that has recently been created or accessed -- is automatically moved to the performance tier, so anyone accessing that data will experience SSD-level performance. As the data cools off -- i.e., is accessed less frequently -- it is automatically moved to the capacity tier to make room in the performance tier for new data. The advantage to using this approach is that it provides a way for organizations to reach a level of storage performance that is somewhat comparable to that of an all-flash array but at a significantly lower cost. Additionally, hybrid arrays enable organizations to achieve a higher overall storage capacity than what might be possible with an all-flash array.

Considerations for buying an all-flash array

Deciding to buy an AFA involves more than simple comparisons of vendor products. Factors that must be considered prior to purchasing an AFA include the following:

- Application performance. An all-flash array that delivers massive performance increases to a specific set of applications may not provide equivalent benefits to other workloads. For example, running virtualized applications in flash with inline data deduplication and compression tends to be more cost-effective than flash that supports streaming media in which unique files are not easily compressed.

- Read performance. An all-SSD system will produce smaller variations than that of an HDD array in maximum, minimum and average latencies. This makes flash a good fit for most read-intensive applications.

- Write performance. Write-intensive workloads require a special algorithm to collect all the writes on the same block of the SSD, thus ensuring the software always writes multiple changes to the same block.

- Endurance. Garbage collection can present a similar issue with SSDs. A flash cell can only withstand a limited number of writes, so wear leveling can be used to increase flash endurance. Most vendors design their all-flash systems to minimize the impact of garbage collection and wear leveling, though users with write-intensive workloads may wish to independently test a vendor's array to determine the best configuration.

- Cost. Despite paying a higher upfront price for the system, users who buy an AFA may see the cost of storage decline over time. This is tied to an all-flash array's increased CPU utilization, which means an organization will need to buy fewer application servers.

- Data center impact. The physical size of an AFA is smaller than that of a disk array, which lowers the rack count. Having fewer racks in a system also reduces the heat generated and the cooling power consumed in the data center.

All-flash array: An evolving market

Flash was first introduced as a handful of SSDs in otherwise all-HDD systems, with the purpose to create a small flash tier to accelerate a few critical applications. Thus, the hybrid flash array was born.

The next phase of evolution arrived with the advent of software that enabled an SSD to serve as a front-end cache for disk storage, extending the benefit of faster performance across all the applications running on the array.

The now-defunct vendor Fusion-io was an early pioneer of fast flash. Launched in 2005, Fusion-io sold Peripheral Component Interface Express (PCIe) cards packed with flash chips. Inserting the PCIe flash cards in server slots enabled a data center to boost the performance of traditional server hardware. In 2014 Fusion-io was acquired by SanDisk, which itself was subsequently acquired in 2016 by Western Digital.

Also breaking ground early was Violin, whose systems -- designed with custom-built silicon -- gained customers quickly, fueling its rise in public markets in 2013. By 2017, Violin was surpassed by all-flash competitors whose arrays integrated sophisticated software data services. After filing for bankruptcy, the vendor was relaunched by private investors as Violin Systems in 2018, with a focus on selling all-flash storage to managed service providers. Holding company StorCentric acquired Violin in 2020, marking its third ownership change in three years.

All-flash array vendors such as Pure Storage and XtremIO -- now part of Dell -- were among the earliest to incorporate inline compression and data deduplication, which most other vendors now include as a standard feature. Adding deduplication helped give AFAs the opportunity for price parity with storage based on cheaper rotating media.

All-flash array vendors and products

Leading all-flash array products include the following:

- DDN IntelliFlash N-Series.

- Dell Isilon NAS.

- Dell PowerStore.

- Dell PowerVault.

- Fujitsu Eternus AF.

- HPE Alletra.

- HPE Nimble Storage AF Series.

- HPE XP8.

- Hitachi Vantara Virtual Storage Platform.

- Huawei OceanStor Dorado.

- IBM FlashSystem.

- Infinidat InfiniBox SSA.

- Lenovo ThinkSystem DE Series.

- NetApp All Flash FAS.

- NetApp AFF A-Series.

- NetApp ASA series SAN arrays.

- Pure Storage FlashArray.

- Pure FlashBlade.

- Qumulo C Series NAS arrays.

Below are vendors that have changed gears or exited the all-flash business:

- Kaminario, renamed Silk in 2020, transitioned from commodity-based AFA products to focus its hardware stack for scalable block storage in the cloud.

- Nimbus Data exited the all-flash array market to focus on its ExaFlash drive family, including a 100 TB SSD aimed at hosting providers.

- Tegile Systems was acquired by Western Digital in 2017. Tegile arrays subsequently became part of Western Digital's IntelliFlash system, now owned by DataDirect Networks.

- Violin Systems, now owned by StorCentric, was one of the first AFA vendors, but it quickly fell behind other vendors as it struggled to deliver flash-optimized software management.

Impact on hybrid arrays use cases

Falling flash prices, data growth and integrated data services have increased the appeal of all-flash arrays for many enterprises. This has led to industry speculation that all-flash storage can supplant hybrid arrays. Nevertheless, there are good reasons for customers to consider hybrid storage infrastructure.

HDDs offer predictable performance at a fairly low cost per gigabyte. The flip side is that HDDs use more power and are slower than flash, resulting in a high cost per IOPS. All-flash arrays have a lower cost per IOPS, are faster and consume less power, but they have a higher upfront acquisition price and per-gigabyte cost.

A hybrid flash array enables enterprises to strike a balance between cost and performance. Since a hybrid array supports high-capacity disk drives, it may offer greater total storage than an AFA, although newer SSDs are appearing on the market with multiple petabytes of storage capacity.

All-flash NVMe and NVMe over fabrics

All-flash arrays based on NVMe flash technologies represent the next phase of maturation. The NVMe host controller interface speeds data transfer by enabling an application to communicate directly with back-end storage.

The NVMe-oF transport mechanism enables a long-distance connection between host devices and NVMe storage devices.

NVMe is meant to be a faster alternative to the SCSI standard that transfers data between a host and a target device. NVMe provides the ability to create pools of high-performance shared storage across a network.

Development of the NVMe standard is under the auspices of NVM Express Inc., a nonprofit organization comprising more than 100 member technology companies.

The NVMe standard is widely considered to be the eventual successor to the Serial-Attached SCSI and Serial Advanced Technology Attachment protocols. NVMe form factors include add-in cards, U.2 2.5-inch and M.2 SSD devices.

NVMe over Fabrics, or NVMe-oF, is a set of protocol specifications that enables hosts to connect and communicate directly to storage. The resulting data transfer is accomplished through NVMe message commands using Ethernet, Fibre Channel or InfiniBand.

Presently, market-leading storage vendors are creating flash storage systems based on NVMe-oF. These systems integrate custom NVMe flash modules as a fabric in place of a bunch of NVMe SSDs.

Available NVMe-based products include the following:

- HPE Alletra 9000 4-way NVMe Storage Base.

- Pure Storage FlashArray//X.

- StorOne S1 Enterprise Storage Platform with NVMe-oF over TCP.

- Supermicro SuperStorage systems.

- Tintri's NVMe Flash N-Series.

A handful of startups have gained traction selling NVMe-oF storage systems, including Kioxia, Lightbits Labs and Vast Data.

A handful of NVMe-flash startups tried bringing all-flash array products to market, but all either have been acquired or ceased operations. The list includes Apeiron Data Systems, E8 Storage, Pavilion Data Systems and Vexata.

All-flash storage arrays in hyperconverged infrastructure

Hyperconverged infrastructure (HCI) systems combine computing, networking, storage and virtualization resources as an integrated appliance. Most hyperconvergence products are designed to use disk as front-end storage, relying on a moderate flash cache layer to accelerate applications or to use as cold storage.

The higher cost of flash imposes a price premium on an all-flash HCI system, making them less common than disk-based HCI platforms.

However, all-flash HCI nodes are an option from various vendors:

- Cisco HyperFlex HX220.

- Dell VxRail.

- HPE SimpliVity 380.

- Hitachi Vantara Unified Compute Platform HC.

- IBM Hyperconverged Systems by Nutanix, running the Nutanix Acropolis hypervisor.

- IBM Storage Fusion HCI system.

- Nutanix NX-9000.

- StarWind HCI Appliance.

Some storage array vendors struggled to gain a foothold in the HCI market. NetApp said it will begin phasing out its NetApp HCI product, which used all-flash SolidFire arrays as storage.

Storage class memory in AFAs

One of the most significant developments with respect to all-flash arrays is the emergence of quad-level cell NAND flash drives to augment performance. Major storage vendors have rolled out QLC-enabled versions of their AFAs for cloud and other non-mission-critical workloads. NetApp has launched the All-Flash FAS C-Series storage portfolio, which adds QLC SSDs to help drive down the cost of capacity.