5 ways hybrid cloud storage is changing SAN management

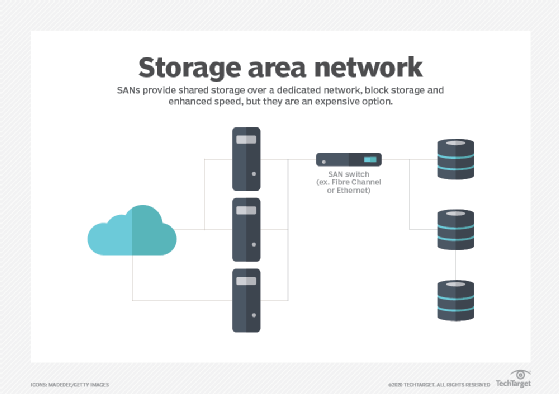

Increasing reliance on cloud storage is changing the way storage area networks are managed and the challenges that SAN admins face in responding to business requirements.

Data has grown at an unprecedented pace for years, and storage management has had to change to keep up. Industry observers have repeatedly predicted that storage area networks would become obsolete. But it hasn't happened, and the fundamental architecture has remained relevant in a changing landscape.

As data increasingly moves to the cloud, SAN management has adapted to new technology and approaches. More attention is being paid to security, training and vendor partnerships, in particular. Those changes have had significant implications for SAN management and the job of the SAN administrator.

Traditional SAN management

Traditional management of a local storage area network requires a great deal of attention to detail, because every aspect of the design and infrastructure is supported and documented locally. That makes thorough reporting much more important with a SAN. Admins must pay close attention to LUNs and LUN management; targets; SAN hosts, including both driver and security updates; Fibre Channel (FC) switches; and host bus adapter ports.

SAN management functions are more detailed, including the configuration of FC switches, SAN zoning, log management and review, monitoring intervals, and alarms and notifications. Maintaining efficiency is also more complex, as admins must focus more on grouping and organizing LUNs, storage systems and SAN hosts for efficient management and monitoring.
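To make the zoning review concrete, here is a minimal Python sketch that checks whether every host port shares at least one zone with a storage target port. The WWPNs, zone names, host names and data layout are all invented for illustration. A real fabric would supply this information through the switch vendor's own tools.

```python
# Hypothetical fabric data; every WWPN, host and zone name below is made up.
TARGET_PORTS = {"50:06:01:60:00:00:00:a1", "50:06:01:68:00:00:00:a1"}

HOST_PORTS = {
    "db01": ["10:00:00:00:c9:aa:00:01"],
    "app01": ["10:00:00:00:c9:aa:00:02"],
    "web01": ["10:00:00:00:c9:aa:00:03"],
}

ZONES = {
    "z_db01_arrayA": {"10:00:00:00:c9:aa:00:01", "50:06:01:60:00:00:00:a1"},
    "z_app01_arrayA": {"10:00:00:00:c9:aa:00:02", "50:06:01:68:00:00:00:a1"},
    # web01 sits in a zone that contains no storage target port.
    "z_web01_orphan": {"10:00:00:00:c9:aa:00:03"},
}


def unzoned_host_ports(zones, host_ports, target_ports):
    """Return (host, wwpn) pairs that share no zone with any target port."""
    missing = []
    for host in sorted(host_ports):
        for wwpn in host_ports[host]:
            # A port is reachable if some zone holds both it and a target.
            reachable = any(
                wwpn in members and members & target_ports
                for members in zones.values()
            )
            if not reachable:
                missing.append((host, wwpn))
    return missing


if __name__ == "__main__":
    for host, wwpn in unzoned_host_ports(ZONES, HOST_PORTS, TARGET_PORTS):
        print(f"{host}: port {wwpn} is not zoned to any storage target")
```

A check like this could feed the alarms and notifications mentioned above, so that a missed zoning change shows up in a report rather than during an outage.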

The path to the hybrid storage model

Not long ago, many enterprises were talking about transitioning to cloud-only approaches to storage. However, organizations that tried that ran into issues, primarily in data security and cost management.

Public cloud storage costs have strained budgets, spurring enterprises toward the hybrid storage model. Several other factors are behind the hybrid trend, notably the need for rapid deployment, agility, scalability, staffing changes, lower capital expenditures and overall reliability. In my recent deployments, we couldn't migrate many servers to the cloud because of the cost and the time required to comply with the necessary regulatory requirements.

Despite these issues, the benefits of using the cloud to create new data-driven business value have outweighed the disadvantages for many enterprises. In particular, the scale and flexibility of the cloud make it fast and easy to modify applications and resources.

Today, cloud storage remains important; however, the idea that everything will move to the cloud is no longer realistic. The landscape has shifted toward a hybrid cloud storage model.

The key to implementing a hybrid model is knowing your organization's environment well enough to effectively manage the hybrid combination. In particular, you'll want to know which servers and applications must be local to optimize performance, management and scalability, and which are flexible enough to go to the cloud.

The goal is to create a well-rounded environment that makes the most of cost benefits, performance, stability and security across both the on-site SAN and the cloud. The need to satisfy various data compliance requirements -- such as the Criminal Justice Information Services (CJIS) Security Policy, GDPR and HIPAA -- can determine what stays on site and what goes to the cloud. Performance requirements are also important to consider, as database applications may not perform optimally in a client-server environment when database servers are hosted in the cloud.
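The placement decision described here can be written down as a few explicit rules. The Python sketch below is one hypothetical way to do that. The workload names, the `ON_SITE_REGIMES` policy set and the rule order are assumptions made for illustration, and whether a given regulation actually requires on-site storage is a question for your own compliance team.

```python
from dataclasses import dataclass

# Assumption: this organization's policy keeps data under these regimes on
# site. The regulations themselves do not necessarily require that.
ON_SITE_REGIMES = frozenset({"CJIS", "HIPAA"})


@dataclass
class Workload:
    name: str
    regimes: frozenset = frozenset()   # compliance regimes that apply
    latency_sensitive: bool = False    # e.g. a client-server database


def placement(workload, on_site_regimes=ON_SITE_REGIMES):
    """Suggest where a workload belongs; compliance is checked first."""
    if workload.regimes & on_site_regimes:
        return "on-site SAN (compliance policy)"
    if workload.latency_sensitive:
        return "on-site SAN (performance)"
    return "cloud candidate"


if __name__ == "__main__":
    workloads = [
        Workload("records-db", frozenset({"CJIS"}), latency_sensitive=True),
        Workload("erp-db", latency_sensitive=True),
        Workload("marketing-site", frozenset({"GDPR"})),
    ]
    for w in workloads:
        print(f"{w.name}: {placement(w)}")
```

Putting the rules in one place makes it easier to review them when a regulation or a performance requirement changes.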

SAN management and hybrid cloud storage

The hybrid cloud has significantly changed enterprise storage, making it more scalable, accessible and flexible. The hybrid approach has also changed the focus of SAN management. What follows is a look at five key areas where this is the case.

Cost controls

Increasing costs and ongoing cost-management challenges of cloud storage have led many businesses to the hybrid storage model.

With local SAN management, cost control is more about the management of hardware lifecycles, service contracts and capital expenses. Hardware support costs can significantly increase after five years. That's why lifecycle management and proper planning of hardware replacements within five years are key to controlling costs.
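A simple age check is often enough to start a replacement plan. The following Python sketch flags hardware as it approaches the five-year mark. The one-year planning lead and the sample dates are assumptions, not figures from any vendor.

```python
from datetime import date

REPLACE_AT_YEARS = 5   # the five-year guideline discussed above
PLAN_AHEAD_YEARS = 1   # assumption: start budgeting one year earlier


def lifecycle_status(in_service, today):
    """Classify a device by its age in years since it entered service."""
    age_years = (today - in_service).days / 365.25
    if age_years >= REPLACE_AT_YEARS:
        return "past five years"
    if age_years >= REPLACE_AT_YEARS - PLAN_AHEAD_YEARS:
        return "plan replacement"
    return "ok"


if __name__ == "__main__":
    # Hypothetical inventory: device name -> in-service date.
    inventory = {
        "array-a": date(2019, 3, 1),
        "fc-switch-1": date(2020, 9, 1),
        "array-b": date(2023, 2, 1),
    }
    today = date(2025, 1, 1)  # fixed date so the example is repeatable
    for name, in_service in inventory.items():
        print(f"{name}: {lifecycle_status(in_service, today)}")
```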

Once organizations add in cloud resources, they must focus more on billing and managing costs, along with resource use, storage optimization, security and contract management. It's easy to allocate too many resources to a cloud instance and drive up costs. Making sure that servers are properly sized and keeping track of monthly costs are critical. However, tracking costs and forecasting use can be a challenge. Periodic reviews of how resources are used and matching an organization's requirements to allocated resources are key to cost savings.
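A periodic review can be as simple as comparing what was allocated with what was actually used. The Python sketch below flags servers whose peak CPU use stays well under their allocation. The inventory, the field names and the 40% threshold are illustrative assumptions. In practice, the figures would come from the provider's billing and monitoring exports.

```python
def rightsizing_candidates(instances, max_peak_utilization=0.40):
    """Return names of instances whose peak CPU use stays under the threshold."""
    flagged = []
    for inst in instances:
        peak_utilization = inst["peak_vcpus_used"] / inst["vcpus"]
        if peak_utilization < max_peak_utilization:
            flagged.append(inst["name"])
    return flagged


def monthly_spend(instances, names):
    """Total monthly cost of the named instances."""
    return sum(inst["monthly_cost"] for inst in instances if inst["name"] in names)


# Hypothetical inventory; all names and numbers are invented.
INVENTORY = [
    {"name": "file-gw-01", "vcpus": 16, "peak_vcpus_used": 3.2, "monthly_cost": 560.0},
    {"name": "sql-rpt-01", "vcpus": 8, "peak_vcpus_used": 6.9, "monthly_cost": 410.0},
    {"name": "backup-px-01", "vcpus": 4, "peak_vcpus_used": 1.0, "monthly_cost": 140.0},
]

if __name__ == "__main__":
    flagged = rightsizing_candidates(INVENTORY)
    print("Review for downsizing:", flagged)
    print("Monthly spend on flagged servers:", monthly_spend(INVENTORY, flagged))
```

The threshold is a starting point for a conversation with application owners. It should not trigger an automatic resize, because some workloads need headroom that peak averages hide.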

Cloud costs can be contained with attention to contract management details. Reserved instances in AWS and Microsoft Azure deployments and committed use discounts in Google Cloud, all of which exchange lower rates for the customer's commitment to a certain level of use, can reduce expenditures. Automation features can save money as well, both in resource use and employee time.
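Whether a commitment pays off depends on how much of the committed capacity is actually used. The Python sketch below works through that arithmetic under a simplified model in which the committed rate is billed for every hour of the year, whether or not the server runs. The hourly rates are made-up numbers, not published prices from any provider.

```python
HOURS_PER_YEAR = 8760


def break_even_utilization(on_demand_hourly, committed_hourly):
    """Fraction of the year a server must run before the commitment is cheaper."""
    return committed_hourly / on_demand_hourly


def annual_costs(on_demand_hourly, committed_hourly, utilization):
    """Return (on_demand_cost, committed_cost) for one year.

    On-demand is billed only for hours used. The commitment is billed for
    every hour (simplifying assumption).
    """
    on_demand = on_demand_hourly * HOURS_PER_YEAR * utilization
    committed = committed_hourly * HOURS_PER_YEAR
    return on_demand, committed


if __name__ == "__main__":
    od_rate, c_rate = 0.10, 0.06  # hypothetical rates per hour
    print(f"Break-even utilization: {break_even_utilization(od_rate, c_rate):.0%}")
    for utilization in (0.5, 0.9):
        od, c = annual_costs(od_rate, c_rate, utilization)
        cheaper = "commitment" if c < od else "on-demand"
        print(f"At {utilization:.0%} use: on-demand {od:.2f}, committed {c:.2f} -> {cheaper}")
```

Under these assumed rates, a server that runs only half the time is cheaper on demand, which is why commitments suit steady workloads better than ones that can be shut down off-hours.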

Security and data compliance

There are several key factors to managing security and data compliance in a hybrid environment. You must know your security goals and understand what each cloud and on-site provider offers, including what's offered by default and what will be an extra expense. With data compliance, it's also important to know where responsibilities lie and what each cloud provider, as well as the local IT team, can offer.

With the cloud, specific security measures are necessary. For instance, multifactor authentication is a must. Many vendors offer compelling options.

Resource management

Managing resources in a hybrid environment is important for cost savings. Both cloud and SAN management require a full understanding of the services and the tools being purchased for effective planning, development, deployment, operations, decommissioning, access management and monitoring of infrastructure resources.

Cloud services can reduce infrastructure manageability. For instance, direct access to server consoles and direct control over what is running on shared infrastructure can be limited. Organizations must consider what level of control they require and identify the tools and services needed.

A local SAN vs. hybrid storage models

Hybrid cloud storage offers organizations a level of scalability, accessibility and flexibility in application deployments that they don't get with a local SAN. The local model, on the other hand, offers more control over capital expenses; hybrid cloud deployments can quickly see costs spiral upward depending on resource utilization. A local model often means duplicating hardware at a recovery site and maintaining multiple locations, while a hybrid model can offer quick and easy disaster recovery (DR) and data center redundancy for applications in the cloud.

Securing data is also different: A local SAN ensures full control over who has access to your data, while the hybrid model can mean giving up some control over the location of your data, and it relies on vendor security for preventing data breaches. A local model also provides more control over your environment at the expense of being able to respond quickly to changing business needs.

One way to deal with the challenges of a hybrid approach is to have a deep understanding of your organization's requirements. That information will help determine which model best fits specific applications and deployments.

In local SAN deployments, vendors generally offer management tools. Some, such as NetApp's OnCommand Insight, can manage and report on many vendors' storage arrays. Third-party tools are also available.

On the cloud side, it's important to review and downsize underutilized resources, turn off unused instances, use reserved instances when possible, shut down servers during off-hours and use caching when possible with tools such as Amazon ElastiCache and Azure Cache for Redis.

Automation

A large amount of effort is required to create new resources, test them, identify when they're no longer needed and decommission unused resources both in the cloud and on site. Automation can improve all these tasks. It's especially useful for improving security and governance, workload management, testing and backup processes.

In the cloud, templates can be created that enable quick automated deployments and schedule automatic shutdowns and restarts of servers that don't require 24/7 uptime. In larger environments, automation can be used for tasks such as resource provisioning, configuration management and application deployment.
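The scheduling logic behind automatic shutdowns and restarts is straightforward. The Python sketch below shows only the decision step, assuming a hypothetical weekday window of 07:00 to 19:00. The actual stop and start calls would go through whichever automation tool or provider API is in use and are deliberately left out.

```python
from datetime import datetime


def should_be_running(now, start_hour=7, stop_hour=19, always_on=False):
    """Decide whether a server should be up at the given local time.

    Assumption: non-24/7 servers run on weekdays between start_hour
    (inclusive) and stop_hour (exclusive).
    """
    if always_on:
        return True
    is_weekday = now.weekday() < 5  # Monday is 0, Sunday is 6
    return is_weekday and start_hour <= now.hour < stop_hour


if __name__ == "__main__":
    checks = [
        datetime(2024, 6, 3, 9, 0),   # a Monday morning
        datetime(2024, 6, 3, 22, 0),  # the same Monday, late evening
        datetime(2024, 6, 8, 9, 0),   # a Saturday morning
    ]
    for moment in checks:
        state = "run" if should_be_running(moment) else "stop"
        print(f"{moment:%a %Y-%m-%d %H:%M}: {state}")
```

With this assumed window, a server runs 60 of the 168 hours in a week, so the schedule only makes sense for workloads that truly have no off-hours users or batch jobs.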

Automation does, however, require specialized skills and tools. In the cloud, it's worth looking at tools such as AWS CloudFormation, Google Cloud Deployment Manager, Microsoft Azure Automation and various third-party tools such as Chef, Puppet and Red Hat's Ansible Automation Platform. There are many options for automating on-site SAN management, such as Ansible, Broadcom CA Automation and Cisco Intelligent Automation for Cloud.

Infrastructure and virtualization

Many decisions must be made in the initial infrastructure deployment. These include the choice of virtualization technology, load balancing, application infrastructure and framework, integration with local resources, and the management framework.

You'll want to assess how a cloud offering fits with your existing environment and workflows. If you're invested in Microsoft's software, you might consider Azure first. If you're heavily invested in Amazon or Google services, using their respective cloud offerings might ease integration.

With local SAN infrastructure, price vs. performance is a primary consideration, as there is much more flexibility when implementing a local infrastructure. Other key considerations include hybrid arrays vs. all-flash, data reduction, encryption, local network design and manageability, and reporting.