Storage

Why application intelligence is the linchpin of modern data centers

AI-based application intelligence tools help IT cope with growing scale and complexity by automatically detecting workloads, analyzing requirements and making recommendations.

It is generally accepted that data is the fuel of the modern business. But who keeps that fuel pumping? The IT department's job is not only to keep data and services available, but also to help line-of-business teams use the data they need to propel the business forward.

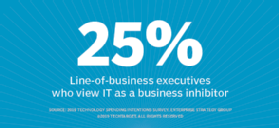

In March 2019, Enterprise Strategy Group conducted its 2019 Technology Spending Intentions Survey. The research found that a quarter of the line-of-business executives who responded view IT as a business inhibitor, more than four times the percentage of those who perceive IT as a competitive differentiator.

That's a problem, as modern digital businesses need IT to be a differentiator to succeed. After all, in this rising digital economy, it is how effectively businesses scrutinize their data that determines winners and losers.

Why is IT struggling to meet the requirements of modern businesses? Two big reasons are scale and complexity. IT environments are larger and more diverse than ever. Infrastructure is more dispersed within the data center and also spans public cloud services and the edge. At the same time, a host of new workloads, such as advanced analytics, machine learning and newly developed container-based applications, is changing the way organizations manage data.

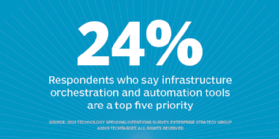

To help IT keep up, a wealth of infrastructure innovation is available now and on the near horizon, including NVMe, storage class memory and persistent memory. New architectures, such as hyper-converged infrastructure, also continue to see increased adoption. On the management side, automation tools are very popular. Among IT organizations that list data center modernization as a priority, 24% identify implementing IT infrastructure orchestration and automation tools as one of their top five priorities.

The combination of new infrastructure technologies and advanced automation sounds like, and should be, a perfect match for a set-it-and-forget-it IT environment. It isn't. A piece is still missing: application intelligence and insight.

Better application insight required

Nearly every CIO wants an IT infrastructure product tailored to his or her company's specific needs. The challenge is that few, if any, organizations truly understand their workload requirements. This lack of insight often becomes exposed when applications are shifted to public cloud services and the cost equation changes.

Even when a business has a good feel for a workload, expectations don't always match reality. For example, a file-based workload with large files suggests mostly large-block sequential I/O. Metadata requests, however, could make it look more like small-block random I/O. And that still doesn't answer the question: What does the workload look like at the compute layer, network layer and storage layer?
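To make the large-file example concrete, here is a minimal Python sketch of how the same workload can look very different depending on whether you count requests or bytes. Everything in it is an assumption for illustration: the `IORequest` type, the `summarize_trace` and `build_example_trace` helpers, the 128 KiB threshold for a "large" block and the trace itself are hypothetical and not taken from any vendor tool or real system.

```python
from dataclasses import dataclass

KIB = 1024
MIB = 1024 * KIB


@dataclass
class IORequest:
    offset: int  # starting byte offset of the request
    size: int    # request length in bytes


def summarize_trace(requests, large_block=128 * KIB):
    """Summarize a trace by request count and by bytes moved."""
    if not requests:
        raise ValueError("trace is empty")
    total_bytes = sum(r.size for r in requests)
    small = sum(1 for r in requests if r.size < large_block)
    large_bytes = sum(r.size for r in requests if r.size >= large_block)
    # A request counts as sequential only if it starts exactly
    # where the previous request ended.
    sequential = sum(
        1
        for prev, cur in zip(requests, requests[1:])
        if cur.offset == prev.offset + prev.size
    )
    return {
        "requests": len(requests),
        "small_request_fraction": small / len(requests),
        "large_byte_fraction": large_bytes / total_bytes,
        "sequential_fraction": sequential / len(requests),
    }


def build_example_trace():
    """Hypothetical trace: a 4 MiB file read in 1 MiB chunks, with
    three scattered 4 KiB metadata lookups before each chunk."""
    trace = []
    metadata_base = 10 * 1024 * MIB  # made-up metadata region
    lookup = 0
    for chunk in range(4):
        for _ in range(3):
            offset = metadata_base + lookup * 7919 * 4 * KIB
            trace.append(IORequest(offset, 4 * KIB))
            lookup += 1
        trace.append(IORequest(chunk * MIB, MIB))
    return trace


if __name__ == "__main__":
    for name, value in summarize_trace(build_example_trace()).items():
        print(f"{name}: {value}")
```

In this made-up trace, nearly all of the bytes move in large blocks, yet three of every four requests are small and no request starts where the previous one ended. Measured by request count at the storage layer, the "large-file" workload looks small-block and random.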

Current workloads already require a certain level of infrastructure, and the new workloads IT will manage demand additional IOPS, bandwidth and capacity. Traditionally, IT delivered storage infrastructure, for example, in two forms -- SAN and NAS -- and the choice essentially came down to small, medium or large array sizes.

Today, IT has a much broader set of infrastructure options at its disposal, and it operates at a much higher infrastructure scale. While multiple architectures can serve the same applications, some will be more efficient than others. Using the more efficient option saves money.

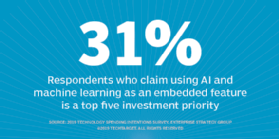

This is why IT needs more application intelligence and insight. Among surveyed organizations that listed data center modernization as a priority, 31% identified AI and machine learning embedded as a feature within IT products and solutions as a top five investment priority. Analyzing workloads at this scale is too costly to do by hand; let the system do it.

Application intelligence in action

Multiple IT providers offer products that address the need for more application insight and intelligence. For example, Hewlett Packard Enterprise (HPE) offers InfoSight, a predictive analytics tool it gained as part of its Nimble acquisition. Using telemetry data and machine learning, InfoSight can predict and identify potential issues, often resolving them before they affect the system. Since the acquisition, HPE has been aggressive with its innovation on InfoSight, expanding support to 3PAR, SimpliVity, Primera, and the Apollo and ProLiant server systems.

Combined, that is a ton of telemetry data across a portfolio, and enterprises can use those insights for more than just routine infrastructure support. With ProLiant servers, for example, InfoSight also offers a recommendation engine that analyzes workload patterns and uses the data to help eliminate performance bottlenecks at the server level. The collection and analysis of telemetry data at both the storage and server level creates an interesting foundation of data that could deliver even greater benefits.

Other examples of predictive analytics products include Dell EMC CloudIQ, Hitachi Vantara Infrastructure Analytics Advisor, IBM Storage Insights, NetApp Active IQ and Pure Storage Pure1.
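As a rough illustration of what predicting an issue from telemetry can mean, the Python sketch below fits a straight line to daily capacity readings and estimates how many days remain before a storage pool fills. This is a deliberately simple stand-in, not how InfoSight or any of the products named above work. The function name `days_until_full` and the sample readings are hypothetical.

```python
def days_until_full(used_tb, capacity_tb):
    """Estimate days until used capacity reaches capacity_tb.

    used_tb is a list of daily readings, oldest first. Returns None
    when there are too few samples or usage is not growing.
    """
    n = len(used_tb)
    if n < 2:
        return None
    # Ordinary least-squares fit of usage against day index 0..n-1.
    mean_x = (n - 1) / 2
    mean_y = sum(used_tb) / n
    sxx = sum((x - mean_x) ** 2 for x in range(n))
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(used_tb))
    slope = sxy / sxx  # estimated growth in TB per day
    if slope <= 0:
        return None
    intercept = mean_y - slope * mean_x
    fitted_today = intercept + slope * (n - 1)
    # Clamp at zero in case the fitted line is already past capacity.
    return max(0.0, (capacity_tb - fitted_today) / slope)


if __name__ == "__main__":
    readings = [50.0, 51.0, 52.0, 53.0, 54.0]  # made-up telemetry, in TB
    print(days_until_full(readings, capacity_tb=60.0))
```

A real tool would draw on far more signals and far more sophisticated models, but the principle is the same: collect telemetry continuously, model the trend and flag the problem before it affects the system.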

Essential, not a choice

Despite the prevalence of these tools -- and their benefits -- organizations use them less than you might expect. As IT scales, however, telemetry information and predictive insights will become even more vital.

The IT infrastructure space is too big and diverse for anyone to understand all the nuances. These AI-based application intelligence tools will play an essential role in improving IT automation efforts in the future.