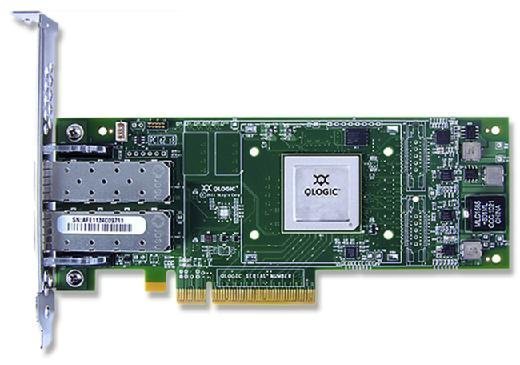

host bus adapter (HBA)

What is a host bus adapter (HBA)?

A host bus adapter (HBA) is a circuit board or integrated circuit adapter that connects a host system, such as a server, to a storage or network device. An HBA also provides input/output (I/O) processing to reduce the load on the host's microprocessor when storing and retrieving data, helping to improve the host's overall performance.

An HBA and its associated disk subsystems are sometimes referred to as a disk channel, while the HBA itself is often called an HBA card. An HBA is typically characterized by the interconnect technology it supports, as well as its speed, port count and system interface. Most HBA cards plug into the Peripheral Component Interconnect Express (PCIe) slots of the host computer, although they might come in other form factors, such as mezzanine cards for blade servers.

Although the term HBA can apply to a variety of interconnects, it is most commonly used with storage protocols, such as Fibre Channel (FC), Small Computer System Interface (SCSI), Serial Advanced Technology Attachment (SATA) and Serial-Attached SCSI (SAS).

Fibre Channel host bus adapters

A Fibre Channel HBA enables connectivity and data transfer between devices in an FC-based storage area network (SAN). An FC HBA can connect a host server to a switch or storage device, connect multiple storage systems, or connect multiple servers when they're used as both application hosts and storage systems. SAN management software recognizes the HBA as the connection point.

Manufacturers of FC HBAs generally update their products in line with increases in the data rates of FC network technology. Fibre Channel products first became available in 1997. Since then, FC HBAs have grown steadily faster. When first introduced, FC HBAs delivered data rates of 1 gigabit per second (Gbps), but speeds have doubled with each new generation:

- 2 Gbps (Gen 2)

- 4 Gbps (Gen 3)

- 8 Gbps (Gen 4)

- 16 Gbps (Gen 5)

- 32 Gbps (Gen 6)

- 64 Gbps (Gen 7)

Gen 6 FC (32 Gbps) can be configured to deliver 128 Gbps by using parallel FC links to stripe four lanes of 32 Gbps FC, thus creating a single link of 128 Gbps. The FC roadmap indicates that single-lane FC speeds will reach 128 Gbps by 2024, so the four-lane solution might become obsolete or be applied to the faster speeds.
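The doubling pattern and the four-lane striping arithmetic described above can be checked with a short sketch. The function names here are hypothetical and chosen only for illustration, and the figures are the nominal data rates listed in this article, not measured throughput.

```python
def fc_generation_speeds(first_gen_gbps=1, generations=7):
    """Return {generation: nominal Gbps}, assuming the rate doubles each generation."""
    return {
        gen: first_gen_gbps * 2 ** (gen - 1)
        for gen in range(1, generations + 1)
    }


def striped_link_gbps(lane_gbps, lanes=4):
    """Nominal rate of a link built by striping identical parallel lanes."""
    return lane_gbps * lanes


speeds = fc_generation_speeds()
gen6_striped = striped_link_gbps(speeds[6])

for gen, gbps in speeds.items():
    print(f"Gen {gen}: {gbps} Gbps")
print(f"Four striped Gen 6 lanes: {gen6_striped} Gbps")
```

With the default arguments, Gen 6 works out to 32 Gbps per lane and the four-lane configuration to 128 Gbps, matching the figures in the text.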

FC HBA manufacturers generally enhance products with additional features as they update to newer generations of FC technology. Improvements over the years have included data integrity features to prevent on-the-wire corruption in database environments. Improvements also include expanded support for virtualization to increase the density of virtual servers.

The market-dominant manufacturers of FC HBAs have been Marvell QLogic and Emulex. (Avago Technologies acquired Emulex and later acquired Broadcom, adopting the Broadcom name in the process.) Additional FC HBA manufacturers include Atto Technology and Hewlett Packard Enterprise (HPE).

Distinguishing features of FC HBAs include performance, reliability, security, power capabilities, support for server virtualization and the availability of single-pane management software.

SCSI adapters/SCSI HBAs

A SCSI HBA is commonly associated with parallel SCSI, a once-popular data transfer technology that has largely been displaced by faster SAS. A SCSI HBA, or SCSI adapter, facilitates connectivity and data transfer between a host and a peripheral device or storage system as defined by the SCSI set of American National Standards Institute standards for I/O interconnects.

A plug-in HBA card typically initiates and sends service and task management requests to a target device, such as a storage drive or array, and receives responses from the target.

Parallel SCSI devices are connected to a shared bus. The maximum parallel SCSI speed is 320 megabytes per second. This is considered too slow to address the demands of modern computing systems, and performance often degrades as more devices are added to the shared bus. Parallel SCSI HBAs are viewed as outdated technology, and most manufacturers have discontinued producing them.
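To illustrate why a shared bus degrades as devices are added, the sketch below divides the 320 MBps maximum among the devices that are active at once. The even split is a simplifying assumption made for illustration only; real parallel SCSI behavior also depends on arbitration and protocol overhead, which this sketch ignores. The function name is hypothetical.

```python
PARALLEL_SCSI_MAX_MBPS = 320  # maximum parallel SCSI speed cited in the text


def per_device_share_mbps(active_devices, bus_mbps=PARALLEL_SCSI_MAX_MBPS):
    """Best-case bandwidth per device, assuming an even split of the shared bus."""
    if active_devices < 1:
        raise ValueError("active_devices must be at least 1")
    return bus_mbps / active_devices


shares = {n: per_device_share_mbps(n) for n in (1, 2, 4, 8)}

for n, mbps in shares.items():
    print(f"{n} active device(s): up to {mbps:.0f} MBps each")
```

Under this assumption, eight simultaneously active devices would each get at most 40 MBps, an eighth of the bus maximum.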

SAS and SATA HBAs

SAS was developed to address the limitations of traditional parallel SCSI and to deliver higher data transfer rates. Like parallel SCSI, SAS uses the SCSI command set, but the method of data transfer is different. SAS is a point-to-point serial data transport protocol.

A SAS HBA is a type of SCSI HBA that typically connects a host computer to a storage device, such as a hard disk drive, solid-state drive, just a bunch of disks (JBOD) device or tape drive. SAS HBAs can connect to single- or dual-port storage devices that are compatible with the SATA or SAS interface. In fact, many of today's SAS HBAs are sold as SAS/SATA devices.

SAS bandwidth started at 3 Gbps and advanced to 6 Gbps and then 12 Gbps. Each new generation of SAS also brought additional functionality, such as the ability to connect devices across longer cable distances. Differentiators between SAS HBA products include the supported SAS speed, data transfer rate, port count, PCIe bus type and power consumption.

Vendors such as Dell, HPE and IBM sell entry-level storage arrays that support a SAS SAN fabric and enable direct connections to servers equipped with SAS HBAs, eliminating the need for network switches. SAS HBAs are typically less expensive than FC HBAs, although an FC SAN offers better performance and more configuration options than a SAS environment.

SAS HBAs can also connect to SAS switches to enable connections between multiple servers and external storage, but the use of switched SAS is not as common as direct connections between the server and storage array.

Major SAS HBA manufacturers include Atto Technology, Broadcom (through Avago's acquisition of LSI), Microsemi (through its acquisition of PMC-Sierra) and HPE.

Other types of network adapters

As with HBAs, the following adapters can also connect a host system to storage or network devices:

- Network interface card (NIC). A NIC enables connectivity and data transfer between hosts and network devices over an Ethernet network. Alternate names include Ethernet adapter and Ethernet network adapter.

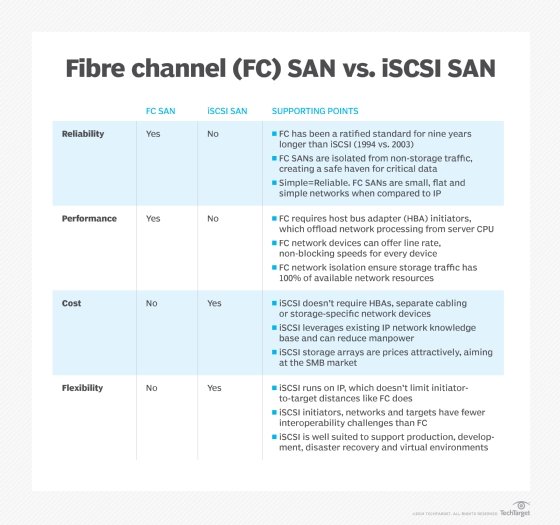

- Internet SCSI (iSCSI) adapter. An iSCSI adapter, also known as an iSCSI HBA or iSCSI NIC, provides SAN connectivity over TCP/IP and Ethernet network infrastructure and offloads the iSCSI and TCP/IP processing to the adapter to improve performance.

- Converged network adapter (CNA). A CNA combines the functionality of an FC HBA and TCP/IP Ethernet NIC and supports local area network and FC SAN traffic.

- Host channel adapter (HCA). An HCA, also known as an InfiniBand adapter, enables low-latency data communication between servers and storage over lossless InfiniBand networks; it is also used as a server-to-server interconnect when servers are used for both application hosting and storage. Use cases include high-performance computing, data analytics, cloud data centers, and large-scale web and trading applications.

- Remote Direct Memory Access over Converged Ethernet (RoCE) NIC. A RoCE NIC, also known as NIC with RoCE, facilitates data transfer directly between the application memory of different servers -- without CPU involvement -- to accelerate performance on lossless Ethernet networks. It supports faster data transfers than an Ethernet NIC and is typically used for high-volume transactional applications, as well as storage and content delivery networks.