service-level agreement (SLA)

What is a service-level agreement (SLA)?

A service-level agreement (SLA) is a contract between a service provider and its customers that documents what services the provider will furnish and defines the service standards the provider is obligated to meet.

A service-level commitment (SLC) is a broader and more generalized form of an SLA. The two differ because an SLA is bidirectional and involves two teams. In contrast, an SLC is a single-directional obligation that establishes what a provider can guarantee its customers at any given time.

Why are SLAs important?

Service providers of all kinds, such as network service providers, cloud service providers and managed service providers (MSPs), need SLAs to help them manage customer expectations. SLAs also define the circumstances under which they are or aren't liable for outages or performance issues. Customers can also benefit from SLAs because the contract describes the performance characteristics of the service -- which can be compared with other vendors' SLAs -- and sets forth the means for redressing service issues.

The SLA is typically one of two foundational agreements that service providers have with their customers. Many service providers also use a master service agreement to set the general terms and conditions under which they will work with customers.

The SLA is often incorporated by reference in the service provider's master service agreement. Between the two service contracts, the SLA adds greater specificity regarding the services provided and the metrics that will be used to measure their performance. Service commitments define the services that are included with the service offering.

SLAs originally defined the levels of support that software, hardware and networking companies would provide to customers running technologies in on-premises data centers and offices.

When IT outsourcing emerged in the late 1980s, SLAs evolved as a mechanism to govern such relationships. Service-level agreements set the expectations for a service provider's performance and established penalties for missing the targets and, in some cases, bonuses for exceeding them. Because outsourcing projects were frequently customized for a particular customer, outsourcing SLAs were often drafted to govern a specific project.

As managed services and cloud computing services continue to be widely used, SLAs have evolved to address the new approaches. Shared services, rather than customized resources, characterize the newer contracting methods. Service-level commitments are frequently used to produce broad agreements intended to cover all of a service provider's customers.

Who needs a service-level agreement?

SLAs are thought to have originated with network service providers but are now widely used in a range of IT-related fields. Some examples of industries that establish SLAs include IT service providers and MSPs as well as cloud computing and internet service providers (ISPs).

Corporate IT organizations, particularly those that have embraced IT service management, enter into SLAs with their in-house customers -- users in other departments within the enterprise. An IT department creates an SLA so its services can be measured, justified and compared with those of outsourcing vendors.

Key components of an SLA

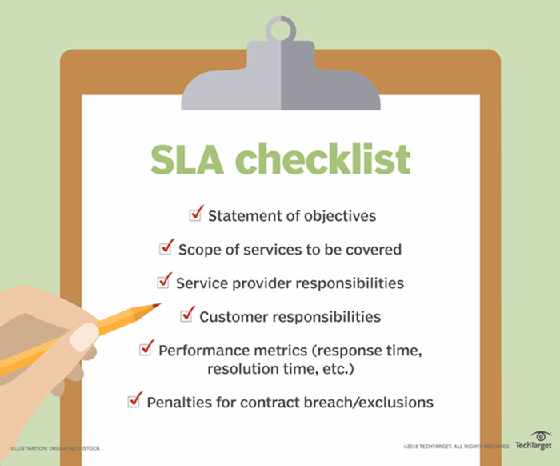

Key components of a service-level agreement include the following:

- Agreement overview. This first section sets forth the basics of the agreement, including the parties involved, the start date and a general introduction of the services provided.

- Description of services. The SLA needs detailed descriptions of every service offered, under all possible circumstances, with the turnaround times included. Service definitions should include how the services are delivered, whether maintenance service is offered, the hours of operation, the locations of dependencies, an outline of the processes, and a list of all technologies and applications used.

- Exclusions. Specific services that are not offered should also be clearly defined to avoid confusion and eliminate room for assumptions.

- Service performance. Performance measurement metrics and performance levels are defined. The client and service provider should agree on a list of all the metrics they will use to measure the service levels of the provider.

- Redressing. Compensation or payment to the customer should be defined if a provider cannot properly fulfill its SLA obligations.

- Stakeholders. The parties involved in the agreement and their responsibilities are clearly defined.

- Security. All security measures that will be taken by the service provider are defined. Typically, this includes drafting and agreeing on IT security and nondisclosure agreements.

- Risk management and disaster recovery. Risk management processes and a disaster recovery plan are established and clearly communicated between the parties.

- Service tracking and reporting. This section defines the reporting structure, tracking intervals and stakeholders involved in the agreement.

- Periodic review and change processes. The SLA and all established key performance indicators (KPIs) should be regularly reviewed. The review process is defined, as is the appropriate process for making changes.

- Termination process. The SLA should define the circumstances under which the agreement can be terminated or will expire. A notice period from either side should also be established.

- Signatures. Finally, all stakeholders and authorized participants from both parties must sign the document to show their approval of every detail and process.

When developing an SLA, end users can use a template to simplify the process. Vendors will probably have their own SLA formats. Users must identify their business needs; customer experience expectations; and performance metrics such as defect rates, network latency and service-level indicators. Templates can provide placeholders for items including specific deliverables, functionality to be provided, type of service to be delivered, quality of service and response to disruptions.

What are the three types of SLAs?

There are three basic types of SLAs: customer, internal and multilevel service-level agreements.

A customer service-level agreement is between a service provider and its external or internal customers. It is sometimes called an external service agreement.

In a customer-based SLA, the customer and service provider come to a negotiated agreement on the services that will be provided. For example, a company may negotiate with the IT service provider that manages its accounts payable and receivable systems to define their specific relationship and expectations in detail.

A customer service-level agreement includes the following:

- Exact details of the service expected by the customer.

- Provisions of the service availability.

- Standards for each level of service.

- Each party's responsibilities.

- Escalation procedures.

- Penalties for failure to achieve SLA metrics.

- Terms for cancellation.

An internal SLA is between an organization, such as an IT department, and its internal customer, such as another organization, department or site.

That means that a company could have an SLA open with each of its customers, and it might also have a separate SLA between its marketing and sales departments defining the operational performance that is expected.

For example, a company's sales department might target $10,000 worth of sales every month, with each sale worth $500. That goal requires 20 sales, so if the sales team's average closing rate is 20%, sales knows that marketing must deliver at least 100 qualified leads every month.

Based on these figures, the head of the organization's marketing department works with the head of sales on an SLA that stipulates that marketing will deliver 100 qualified leads to sales by a specific date every month.

This service-level agreement could stipulate that it will include four weekly status reports every month sent from marketing to sales to ensure the leads the sales team is getting are enabling them to hit their monthly sales goal.
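The arithmetic behind this lead target can be sketched as follows. This is only an illustration: the function name `required_leads` and its parameters are hypothetical, and the closing rate is passed as a whole-number percentage to keep the calculation exact.

```python
import math


def required_leads(monthly_sales_goal: float, sale_value: float,
                   closing_rate_percent: int) -> int:
    """Return the minimum number of qualified leads needed to reach the goal."""
    if sale_value <= 0 or not 0 < closing_rate_percent <= 100:
        raise ValueError("sale_value must be positive and closing rate in 1..100")
    # Number of closed sales needed to reach the monthly goal.
    sales_needed = math.ceil(monthly_sales_goal / sale_value)
    # Each lead closes with probability closing_rate_percent / 100.
    return math.ceil(sales_needed * 100 / closing_rate_percent)


# Figures from the example: a $10,000 goal, $500 per sale, 20% closing rate.
print(required_leads(10_000, 500, 20))  # 100
```

If the closing rate or the average sale value changes, the same calculation shows how the lead commitment in the internal SLA would need to be renegotiated.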

A multilevel SLA will divide the agreement into various levels that are specific to a series of customers using the service. For example, a software-as-a-service provider might offer basic services and support to all customers using a product, but it could also offer different price ranges that dictate different service levels. These different levels of service will be layered into the multilevel SLA.
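One way to picture these layered service levels is as a small lookup table. The sketch below is purely illustrative: the plan names, uptime targets and response times are invented for the example and do not come from any real provider's SLA.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ServiceLevel:
    uptime_percent: float          # committed availability for the plan
    support_response_hours: int    # maximum time to first support response


# Hypothetical plans; every customer gets at least the "basic" level.
SERVICE_LEVELS = {
    "basic": ServiceLevel(uptime_percent=99.5, support_response_hours=24),
    "standard": ServiceLevel(uptime_percent=99.9, support_response_hours=8),
    "premium": ServiceLevel(uptime_percent=99.99, support_response_hours=1),
}


def service_level_for(plan: str) -> ServiceLevel:
    """Look up a plan's commitments, falling back to the basic level."""
    return SERVICE_LEVELS.get(plan, SERVICE_LEVELS["basic"])


print(service_level_for("premium"))
```

Falling back to the basic level for unknown plans is a design assumption here; a real system might instead reject an unrecognized plan.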

Service-level agreement examples

One specific example of an SLA is a data center SLA. This can include the following:

- An uptime guarantee that indicates the percentage of time a specific system or network service is available. Nothing less than a 99.99% uptime should be considered acceptable for modern, enterprise-level data centers.

- A definition of proper environmental conditions. This should include oversight and maintenance practices as well as compliance with HVAC -- heating, ventilation and air conditioning -- standards.

- The promise of technical support. Customers must be confident that data center staff will respond quickly and effectively to any problem and be available at any time to address it.

- Detailed security precautions that will keep the customer's information assets secure. This could include cybersecurity measures that protect against cyberattacks as well as physical security measures that restrict data center access to authorized personnel. Physical security features could include two-factor authentication, gated entries, cameras and biometric authentication.
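To put the uptime guarantee from the list above into concrete terms, an uptime percentage can be converted into the amount of downtime it permits. The following is a minimal sketch that assumes a 30-day measurement period; the function name is hypothetical, and actual contracts define their own measurement windows.

```python
def allowed_downtime_minutes(uptime_percent: float, period_days: int = 30) -> float:
    """Return the downtime, in minutes, that an uptime guarantee permits."""
    if not 0 <= uptime_percent <= 100:
        raise ValueError("uptime_percent must be between 0 and 100")
    total_minutes = period_days * 24 * 60
    return total_minutes * (100 - uptime_percent) / 100


for target in (99.9, 99.99, 99.999):
    minutes = allowed_downtime_minutes(target)
    print(f"{target}% uptime allows {minutes:.2f} minutes of downtime per 30 days")
```

Under this assumption, a 99.99% guarantee leaves a little over four minutes of downtime in a 30-day period.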

Another specific example is an ISP SLA. This will include an uptime guarantee, but it will also define packet delivery expectations and latency. Packet delivery refers to the percentage of data packets that are received compared to the total number of data packets sent. Latency is the amount of time it takes a packet to travel between clients and servers.
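The two ISP measurements can be expressed in a few lines. This sketch uses invented sample numbers, and the function names are hypothetical; the SLA itself would state which thresholds apply and how samples are collected.

```python
from statistics import mean


def packet_delivery_percent(packets_sent: int, packets_received: int) -> float:
    """Percentage of sent packets that were received."""
    if packets_sent <= 0:
        raise ValueError("packets_sent must be positive")
    return 100.0 * packets_received / packets_sent


def average_latency_ms(samples_ms: list[float]) -> float:
    """Average travel time, in milliseconds, across latency samples."""
    return mean(samples_ms)


# Invented measurements for illustration only.
delivery = packet_delivery_percent(packets_sent=10_000, packets_received=9_995)
latency = average_latency_ms([40, 45, 50])
print(f"Packet delivery: {delivery:.2f}%, average latency: {latency} ms")
```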

How to validate SLA levels

Verifying the provider's service delivery levels is necessary to the enforcement of a service-level agreement. If the SLA is not being properly fulfilled, then the client may be able to claim the compensation agreed upon in the contract.

Service providers often make their service-level statistics available through an online portal. This allows customers to track whether the proper service level is being maintained. If they find it is not, the portal also allows clients to see if they are eligible for compensation. The availability of such a portal can be an important factor when selecting a vendor.

These systems and processes are frequently controlled by specialized third-party companies. If this is the case, then it is necessary for the third party also to be included in the SLA negotiations. This will provide them with clarity about the service levels that should be tracked and explanations of how to track them.

Tools that automate the capturing and displaying of service-level performance data are also available.
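As a rough idea of what such automation does, the sketch below computes measured availability for a month from a list of recorded outages and compares it with a target. The `Outage` record, the 99.9% target and the sample outage are all assumptions made for the example, and the code assumes the outages do not overlap.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class Outage:
    start: datetime
    end: datetime


def measured_availability(outages: list[Outage], period_start: datetime,
                          period_end: datetime) -> float:
    """Return availability as a percentage of the reporting period."""
    period_seconds = (period_end - period_start).total_seconds()
    down_seconds = 0.0
    for outage in outages:
        # Count only the part of each outage that falls inside the period.
        start = max(outage.start, period_start)
        end = min(outage.end, period_end)
        if end > start:
            down_seconds += (end - start).total_seconds()
    return 100.0 * (period_seconds - down_seconds) / period_seconds


# Hypothetical month with a single 90-minute outage.
outages = [Outage(datetime(2024, 6, 10, 2, 0), datetime(2024, 6, 10, 3, 30))]
availability = measured_availability(outages, datetime(2024, 6, 1), datetime(2024, 7, 1))
target = 99.9  # assumed SLA target
print(f"Measured availability: {availability:.3f}% (target {target}%)")
print("SLA met" if availability >= target else "SLA missed")
```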

SLAs and indemnification clauses

An indemnification is a contractual obligation made by one party -- the indemnitor -- to redress the damages, losses and liabilities experienced by another party -- the indemnitee -- or by a third party. Within an SLA, an indemnification clause will require the service provider to acknowledge that the customer is not responsible for any costs incurred through violations of contract warranties. The indemnification clause may also require the service provider to pay the customer for any litigation costs from third parties that resulted from the contract breach.

To limit the scope of indemnifications, a service provider can do the following:

- Consult an attorney.

- Limit the number of indemnitees.

- Establish monetary caps for the clause.

- Create time limits.

- Define the point at which the responsibility for indemnification starts.

SLA performance metrics

SLAs include metrics to measure the service provider's performance. Because it can be challenging to select metrics that are fair to the customer and the service provider, it's important that the metrics are within the control and expertise of the service provider. If the service provider is unable to control whether a metric performs as specified, it is not fair to hold the vendor accountable for that metric. This should be discussed up front among the parties before executing the SLA.

It is essential that data supporting the metrics is accurately collected, and an automated process might be an important solution. In addition, the SLA should specify a reasonable baseline for the metrics, which can be refined when more data is available on each metric.

SLAs establish customer expectations regarding the service provider's performance and quality in several ways. Some metrics that SLAs may specify include the following:

- Availability and uptime percentage. This metric quantifies the amount of time services are running and accessible to the customer. Uptime is generally tracked and reported per calendar month or billing cycle.

- Specific performance benchmarks. Actual performance can be periodically compared to accepted benchmarks.

- Service provider response time. This is the time it takes the service provider to respond to a customer's issue or request. A larger service provider may operate a service desk to respond to customer inquiries.

- Resolution time. This is the time it takes for an issue to be resolved once logged by the service provider.

- Abandonment rate. The percentage of queued calls customers abandon while waiting for answers.

- Business results. This metric uses agreed KPIs to determine how service providers' contributions affect the performance of the business.

- Error rate. The percentage of errors in a service, such as coding errors and missed deadlines.

- First-call resolution. The percentage of incoming customer calls that are resolved without the need for a callback from the help desk.

- Mean time to recovery. The average time needed to recover after a service outage.

- Mean time to repair. The average time needed to fix something that has been reported as inoperable.

- Security. This addresses several metrics, such as the number of undisclosed vulnerabilities. If an incident occurs, service providers should be able to demonstrate that they've taken preventive measures.

- Time service factor. The percentage of queued calls customer service representatives answer within a given time frame.

- Turnaround time. The time it takes for a service provider to resolve a specific issue once it has been received.
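Several of the metrics in this list are simple ratios or averages. The sketch below computes four of them from invented call-center and repair figures; the variable names and numbers are hypothetical and serve only to show how the definitions translate into calculations.

```python
from statistics import mean


def percentage(part: int, whole: int) -> float:
    """Return part as a percentage of whole, or 0.0 if whole is zero."""
    return 100.0 * part / whole if whole else 0.0


# Invented monthly figures for illustration.
incoming_calls = 500
resolved_without_callback = 425
queued_calls = 400
abandoned_in_queue = 20
answered_within_time_frame = 340
repair_times_hours = [2.0, 4.0, 3.0]

abandonment_rate = percentage(abandoned_in_queue, queued_calls)
time_service_factor = percentage(answered_within_time_frame, queued_calls)
first_call_resolution = percentage(resolved_without_callback, incoming_calls)
mean_time_to_repair = mean(repair_times_hours)

print(f"Abandonment rate: {abandonment_rate}%")
print(f"Time service factor: {time_service_factor}%")
print(f"First-call resolution: {first_call_resolution}%")
print(f"Mean time to repair: {mean_time_to_repair} hours")
```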

Other SLA provisions might cover a schedule for advance notification of network changes that may affect users, as well as general service usage statistics.

An SLA may specify availability, performance and other parameters for different types of customer infrastructure, including internal networks; servers; and infrastructure components, such as uninterruptible power supplies.

What happens if agreed-upon service levels are not met?

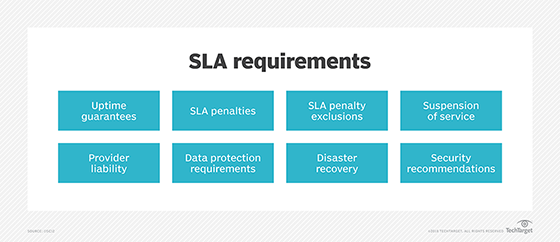

SLAs include agreed-upon penalties in the event a service provider fails to meet the agreed-upon service levels. These remedies could be fee reductions or service credits against the fees incurred by the customer as well as termination of the contract for repeated failures.

Customers can enforce these service credits when service providers miss agreed-upon performance standards. Typically, the customer and the service provider agree to put a certain percentage of the monthly fees at risk. The service credits are taken from those at-risk fees when the vendor misses the SLAs.

The SLA should detail how the service credits will be calculated. For example, the customer and the vendor could develop a formula that provides service credits based on the amount of downtime that exceeds the terms of the SLA. A service provider may cap performance penalties at a maximum dollar amount to limit its exposure.
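One possible form of such a formula is sketched below. It credits a fixed amount for each minute of downtime beyond the allowed amount and caps the total at the at-risk share of the monthly fee. The rate, the cap and all figures are assumptions chosen for the example; real contracts define their own formulas.

```python
def service_credit(monthly_fee: float, allowed_downtime_min: float,
                   actual_downtime_min: float, credit_per_excess_min: float,
                   at_risk_percent: float) -> float:
    """Return the service credit owed for one month under this sample formula."""
    # Only downtime beyond what the SLA allows earns a credit.
    excess_minutes = max(0.0, actual_downtime_min - allowed_downtime_min)
    uncapped_credit = excess_minutes * credit_per_excess_min
    # The credit cannot exceed the share of the fee placed at risk.
    cap = monthly_fee * at_risk_percent / 100
    return min(uncapped_credit, cap)


# Hypothetical contract: $10,000 monthly fee, 10% at risk, $5 per excess minute.
print(service_credit(10_000, 45, 105, 5, 10))   # 60 excess minutes
print(service_credit(10_000, 45, 345, 5, 10))   # 300 excess minutes, hits the cap
```

In the second call, the uncapped credit would be $1,500, but the cap limits the provider's exposure to the $1,000 at risk.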

The SLA will also include a section detailing exclusions, which are situations in which an SLA's guarantees and penalties for failing to meet them do not apply. The list might include events such as natural disasters or terrorist acts. This section is sometimes referred to as a force majeure clause, which aims to excuse the service provider from liability for events beyond its reasonable control.

Service-level agreement penalties

SLA penalties are disciplinary measures that exist to ensure the terms of the contract are maintained. These penalties differ from contract to contract. They may include the following:

- Service availability. This includes factors such as network uptime, data center resources and database availability. Penalties should be added as deterrents against service downtime, which could negatively affect the business.

- Service quality. This involves performance guarantees, the number of errors allowed in a product or service, or processing of gaps and other issues that pertain to quality.

In addition to service credits, there could be the following:

- Financial penalties. These require the vendor to reimburse the customer the amount of damages agreed upon in the contract.

- License extension or support. This requires the vendor to extend the term of the license or offer the customer additional support without charge. This could include development and maintenance.

These penalties must be specified in the language of the SLA, or they cannot be enforced. In addition, some customers might not think the service credit or license extension penalties are adequate compensation. As such, they may question the value of continuing to receive the services of a vendor that is unable to meet its quality levels.

Consequently, it might be worthwhile to consider a combination of penalties as well as include an incentive, such as a monetary bonus, for work that is more than satisfactory.

Considerations for SLA metrics

When choosing which performance metrics to include in the SLA, a company should consider the following factors:

- The measurements should motivate the right behavior. When defining the metrics, both parties should remember that the metrics' goal is to motivate the appropriate behavior on behalf of the service provider and the customer.

- The metrics should only reflect factors that are within the service provider's reasonable control. Data supporting the measurements should also be easy to collect. Furthermore, both parties should resist choosing an excessive number of metrics or measurements that produce large amounts of data. Conversely, including too few metrics can also be a problem, as missing one could make it look like the contract has been breached.

For the established metrics to be useful, a proper baseline must be established with the measurements set to reasonable and attainable performance levels. This baseline will likely be redefined throughout the parties' involvement in the agreement, using the processes specified in the periodic review and change section of the SLA.

What are SLA earn back provisions?

An earn back is a provision that may be included in the SLA that allows providers to regain service-level credits if they perform at or above the standard service level for a certain amount of time. Earn backs are a response to the standardization and popularity of service-level credits.

Service-level credits, also known as service credits, should be the sole and exclusive remedy available to customers to compensate for service-level failures. A service credit deducts an amount of money from the total amount to be paid under the contract if the service provider fails to meet service delivery and performance standards.

If both parties agree to include earn back provisions in the SLA, then the process should be defined carefully at the beginning of the negotiation and integrated into the service-level methodology.
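The mechanics of an earn back can be sketched in code. The rule below is an assumption made for illustration, not a standard: credits stay pending after a missed month, and the provider regains all pending credits once it meets the target for a set number of consecutive months.

```python
def credits_after_earn_back(months: list[tuple[bool, float]],
                            earn_back_months: int) -> float:
    """Return the credits still owed to the customer at the end of the period.

    Each entry in months is (met_target, credit_if_missed), in chronological order.
    """
    pending_credits = 0.0
    consecutive_met = 0
    for met_target, credit in months:
        if met_target:
            consecutive_met += 1
            if consecutive_met >= earn_back_months:
                # The provider has performed long enough to regain its credits.
                pending_credits = 0.0
        else:
            pending_credits += credit
            consecutive_met = 0
    return pending_credits


# One missed month followed by three good months earns the credit back.
print(credits_after_earn_back([(False, 300), (True, 0), (True, 0), (True, 0)], 3))
# A second miss resets the streak, so both credits remain owed.
print(credits_after_earn_back([(False, 300), (True, 0), (False, 200), (True, 0)], 3))
```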

When to revise an SLA

A service-level agreement is a living document and should be reviewed regularly and updated with new information. Most companies revise their SLAs either annually or bi-annually. However, the faster an organization grows, the more often it should review and revise its SLAs.

Knowing when to make changes in an SLA is a key part of managing the client/service provider relationship. Understanding when it is not appropriate to make SLA changes is also important. The two parties should meet on a set schedule to revisit their SLA and ensure it continues to meet the requirements of both parties.

An SLA should be revised if one or more of the following conditions have been met:

- The customer's business requirements change -- for example, its availability requirements increase because it has established an e-commerce website.

- A workload change develops.

- Measurement tools, processes and metrics improve.

- The service provider stops offering an existing service or adds a new service.

- The service provider's technical capabilities change -- for example, new technology or more reliable equipment helps the vendor provide faster response times.

Service providers should review their SLAs every 18 to 24 months even if their capabilities or services haven't significantly changed.

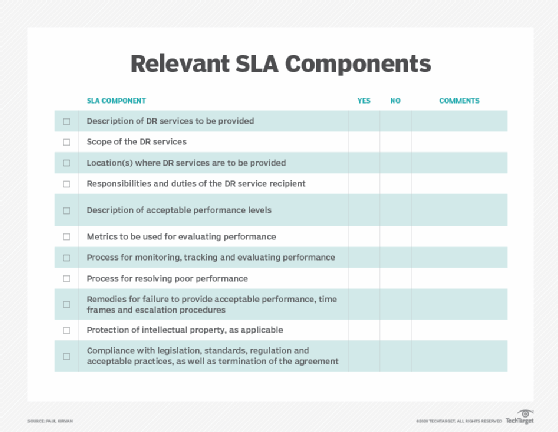
