data retention policy

What is a data retention policy?

A data retention policy, or records retention policy, is an organization's established protocol for retaining information for operational or regulatory compliance needs. In business settings, data retention is a concept that encompasses all processes for storing and preserving data as well as the specific time periods and policies businesses enforce that determine how and for how long data should be retained.

When writing a data retention policy, organizations must determine how to organize information so it can be searched and accessed later and how to dispose of information that's no longer needed.

Some organizations find it helpful to use a data retention policy template that provides a framework to follow when crafting the policy.

A comprehensive data retention policy outlines the business reasons for retaining specific data and what to do with that data when it's targeted for disposal.

What are the goals of data retention?

The goal of data retention is to allow enough time to extract needed value from data while keeping data privacy and security considerations in mind. While businesses can draft their own requirements for data retention, there are also legal considerations that depend on factors such as geography. Other reasons a business would prioritize data retention could include the need for future data analyses.

Whatever the reason, it's imperative that businesses properly manage their data, both for their own benefit and to meet compliance requirements and government regulations. Since businesses operate on many kinds of data and the usefulness of certain data can wane over time, management and retention of such data can get complicated. A data retention policy addresses that complexity by spelling out what is kept, for how long and why.

Why is a data retention policy important?

A data retention policy is part of an organization's overall data management strategy. A policy is important because data can pile up dramatically, so it's crucial to define how long an organization must hold on to specific data.

An organization should only retain data for as long as it's needed, whether that's six months or six years. Retaining data longer than necessary takes up unnecessary storage space and costs more than needed.

What are the benefits of a data retention policy?

There are numerous benefits to establishing a solid data retention policy:

- Automated compliance. With an established policy, organizations can ensure they comply with regulatory requirements mandating the retention of various types of data.

- Reduced likelihood of compliance-related fines. Retaining all the data that's legally required isn't enough; the organization must also produce that data if auditors request it. Retaining only the minimally required volume of data makes it easier and less time-consuming to locate this data, thereby reducing the chances that an organization is fined for its inability to produce data that's required to be retained.

- Reduced storage costs. There's a direct cost associated with data storage, and reducing the volume of stored data reduces that cost.

- Increased relevancy of existing data. Data becomes less relevant as it ages, and a data retention policy removes irrelevant data that's no longer needed.

- Reduced legal exposure. Once data is no longer needed, it's removed, eliminating the possibility that the data can be surfaced during legal discovery and used against the organization.

What are data retention policy best practices?

When it comes to creating a data retention policy, every organization's needs are different. Even so, there are several best practices that organizations should adhere to when establishing a data retention policy:

- Identifying legal requirements. Organizations must determine the laws and regulations that govern their data retention requirements so those requirements can be incorporated into the data retention policy.

- Identifying business requirements. Creating an effective data retention policy involves more than just complying with applicable regulations. The retention policy must also take the organization's business requirements into account. It could be that there are operational requirements that mandate retaining data for longer than what's legally required.

- Considering data types when crafting a data retention policy. In any organization some data is more valuable than other data. An organization should avoid creating a blanket data retention policy that applies to all types of data. Instead, the policy should specifically define the type of data that must be retained and establish retention requirements for each type.

- Adopting a good data archiving system. If regulations require certain types of data to be retained for longer than the business needs them, consider adopting a data archiving system. Such a system can help reduce the cost of storing archival data while automating data lifecycle management (DLM) and providing the tools to locate data in the archives.

- Having a plan for legal hold. If the organization is involved in litigation, then it will likely need to pause the DLM process so the data that was subpoenaed won't be automatically deleted once it reaches the end of its retention period.

- Creating two versions of your data retention policy. If an organization is subject to regulatory compliance, it will likely have to document its data retention requirements to satisfy regulatory mandates. This is a formally written document that can be filled with legal jargon. As a best practice, consider drafting a simpler version of the document that can be used internally as a way of helping stakeholders in the organization better understand retention requirements.
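
Two of the practices above, per-type retention periods and legal hold, can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the data types, the periods in `RETENTION_DAYS`, the `Record` class and the `is_disposable` function are all hypothetical names, and real periods would come from the organization's legal and business requirements.

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Hypothetical per-type retention periods, in days. Real values come from
# the organization's legal and business requirements.
RETENTION_DAYS = {
    "employee_email": 2 * 365,
    "financial_report": 7 * 365,
    "web_log": 180,
}


@dataclass(frozen=True)
class Record:
    record_id: str
    data_type: str
    created: date


def is_disposable(record: Record, today: date, legal_holds: set) -> bool:
    """Return True only if the retention period has passed and no hold applies."""
    if record.record_id in legal_holds:
        return False  # a legal hold pauses disposal regardless of age
    period = RETENTION_DAYS.get(record.data_type)
    if period is None:
        return False  # unclassified types are kept until someone defines a rule
    return today > record.created + timedelta(days=period)


today = date(2024, 6, 1)
old_log = Record("r1", "web_log", date(2023, 1, 1))
old_email = Record("r2", "employee_email", date(2021, 1, 1))

print(is_disposable(old_log, today, legal_holds=set()))     # True
print(is_disposable(old_email, today, legal_holds={"r2"}))  # False
```

Keeping unclassified data by default is a deliberate choice in this sketch: it avoids a blanket rule silently deleting data that nobody has reviewed.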

How do you create a data retention policy?

Creating a data retention policy is rarely a simple process and some organizations might find it better to outsource the policy creation and implementation process rather than doing it internally. For organizations creating their own data retention policies, there are 10 basic steps:

- Decide who'll be responsible for creating the policy. This task won't usually be handled by a single person in the organization because it requires expertise in various areas. Typically, the data retention policy creation process is a team effort with members of the IT staff, the organization's legal department and other key stakeholders.

- Determine the organization's legal requirements. The policy must meet or exceed the requirements outlined in any regulations that apply to the organization. Identify the legal requirements upfront, as they'll be the foundation of the policy.

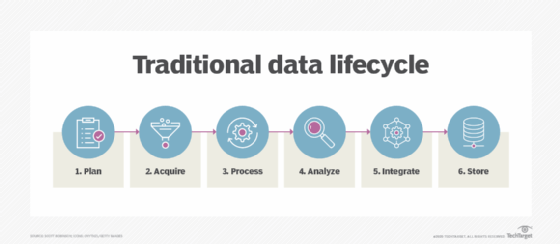

- Define the organization's business requirements. This means identifying various types of data and figuring out how long each data type should be retained. Typically, data is active for a period, then moved to archival storage and eventually purged from the archive as a part of the organization's DLM process.

- Determine who'll be responsible for ensuring that data retention is being performed according to the policy.

- Determine how to perform internal audits to ensure compliance.

- Decide the frequency with which the data retention policy should be reviewed and revised.

- Work with the organization's HR or legal departments to establish a means of enforcing the policy.

- Determine how the data retention requirements are implemented and enforced at a software level.

- Write the official data retention policy.

- Once the policy has been drafted, present the policy to key stakeholders for approval.
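
The active, archive and purge progression described in the steps above could be expressed at the software level roughly as follows. The `Stage` enum, the `lifecycle_stage` function and the one-year and six-year periods are assumptions made for illustration, not a prescribed DLM implementation.

```python
from datetime import date
from enum import Enum


class Stage(Enum):
    ACTIVE = "active"
    ARCHIVE = "archive"
    PURGE = "purge"


def lifecycle_stage(created: date, today: date,
                    active_days: int, archive_days: int) -> Stage:
    """Classify a record by age: active first, then archived, then purged."""
    age = (today - created).days
    if age <= active_days:
        return Stage.ACTIVE
    if age <= active_days + archive_days:
        return Stage.ARCHIVE
    return Stage.PURGE


today = date(2024, 6, 1)
# Hypothetical schedule: one year active, then six more years in the archive.
for created in (date(2024, 1, 1), date(2021, 1, 1), date(2015, 1, 1)):
    print(created, lifecycle_stage(created, today, 365, 6 * 365).value)
# 2024-01-01 active
# 2021-01-01 archive
# 2015-01-01 purge
```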

Proper implementation of a data retention policy

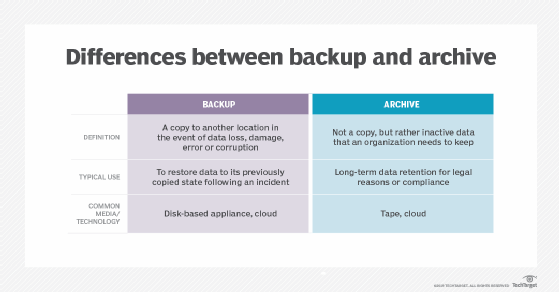

One operational reason for implementing a data retention policy is proper data backup. An organization's backup data helps it recover in the event of data loss. A policy is important to make sure the organization has the right data and the right amount of data backed up. Too little data backed up means the recovery won't be as comprehensive as needed, while too much makes it harder to find and restore the data that matters.

A data retention policy should treat archived data differently from backup data. Archived data is no longer actively used by the organization but still needed for long-term retention. An organization might need data shifted to archives for future reference or for compliance. Archives are stored on cheaper storage media, so they reduce costs and the volume of primary data storage. A user should be able to search archives easily.

For proper creation and implementation of a data retention policy, especially regarding compliance, the IT team should work with the legal team. The legal team will have a better idea of how long data must be retained by law, while IT is responsible for the actual implementation of the policy.

Be careful with the data retention policy. Just because a file was created decades ago doesn't mean it should be automatically deleted after a certain time. That old file could be an important contract the organization must retain, or it could contain other valuable information.

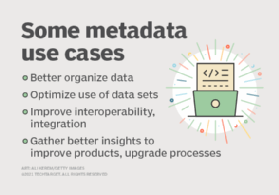

A storage system can retain or remove data based on rules set up by IT. The use of metadata is one way to figure out when a data object is scheduled for deletion or designated to a given storage location. Automated software moves old data to archives, which is especially helpful for organizations with large data volumes. Some software can automatically delete data based on age, outlined in a retention schedule. But administrators must be certain that deleted data serves no further purpose.
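
As a rough sketch of metadata-driven rules, the Python below computes a scheduled deletion date from each object's metadata and produces a dry-run list for administrators to review before anything is removed. The metadata fields (`key`, `created`, `retain_days`), the sample objects and both function names are hypothetical; real storage systems expose their own metadata and lifecycle mechanisms.

```python
from datetime import date, timedelta
from typing import Optional

# Hypothetical metadata attached to stored objects. A "retain_days" of None
# marks objects (such as contracts) that must never be deleted by age alone.
objects = [
    {"key": "logs/2022-03.gz", "created": date(2022, 3, 1), "retain_days": 365},
    {"key": "contracts/lease-1998.pdf", "created": date(1998, 5, 4), "retain_days": None},
    {"key": "reports/q1-2024.xlsx", "created": date(2024, 4, 2), "retain_days": 7 * 365},
]


def delete_on(obj: dict) -> Optional[date]:
    """Return the date the object becomes eligible for deletion, if any."""
    if obj["retain_days"] is None:
        return None
    return obj["created"] + timedelta(days=obj["retain_days"])


def dry_run(objs: list, today: date) -> list:
    """List keys that would be deleted today, without deleting anything."""
    due = []
    for obj in objs:
        when = delete_on(obj)
        if when is not None and when <= today:
            due.append(obj["key"])
    return due


print(dry_run(objects, date(2024, 6, 1)))  # ['logs/2022-03.gz']
```

The decades-old contract is never listed because its metadata exempts it from age-based deletion, which matches the caution above about old but important files.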

Regulatory compliance and data retention

A data retention policy must consider the value of data over time and the data retention laws an organization might be subject to. In 2006, the U.S. Supreme Court recognized that it isn't financially possible to retain all information indefinitely. However, organizations must demonstrate that they only delete data that isn't subject to specific regulatory requirements and that they use a repeatable and predictable process to do so. This means various types of information are held for different lengths of time. For example, a hospital's retention period for employee email would differ from that for its patient records.

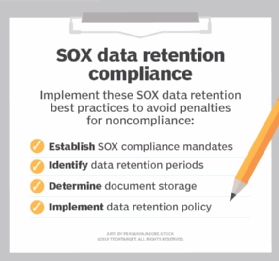

Although it's common for an organization to establish its own data retention requirements, certain data retention laws must be adhered to. This is especially true for organizations operating in regulated industries. For example, publicly traded companies in the U.S. must establish a data retention policy that is compliant with the Sarbanes-Oxley Act (SOX) of 2002. This legislation was passed to restore public confidence in the financial sector after financial reporting scandals, such as that involving Enron Corporation, and to prevent fraud. SOX is comprehensive and has many implications, but one important implication to remember is that it requires businesses to retain financial reports for at least seven years, after which they can be disposed of once they're no longer needed.

Similarly, healthcare organizations are subject to Health Insurance Portability and Accountability Act (HIPAA) data retention requirements, and organizations that accept credit cards must adhere to Payment Card Industry Data Security Standard (PCI DSS) data retention and disposal requirements. For example, HIPAA requires covered organizations to retain required compliance documentation, such as policies and procedures, for at least six years. Retention periods for patient medical records are generally set by state law, and certain organizations might keep patient data for even longer.

Simply retaining data isn't enough. Federal laws commonly require organizations in regulated industries to create a documented data retention policy.

An organization must also consider the General Data Protection Regulation (GDPR), which went into effect in May 2018 and updated data protection laws across the European Union (EU). Mandates apply to personal data produced by EU citizens, whether or not the company collecting the data is in the EU, as well as any people and organizations whose data is stored in the EU. It's critical to have a data retention policy that explains which data is being held, why and where it's being held and for how long, as it relates to GDPR directives. Especially with a sweeping compliance regulation such as GDPR, only keep the personal information that's needed.

The most comprehensive framework encompassing data retention policies in the U.S. is the California Consumer Privacy Act. The legislation was signed into law in 2018 and went into effect in 2020. It was later amended by the California Privacy Rights Act, effective in 2023, which states that businesses must not retain data for longer than needed to complete certain objectives. The law sets no fixed maximum retention period, but it is meant to encourage ethical data use and storage.

Data retention policy examples

Length of time in a data retention policy ranges from minutes to years. Use a policy engine that involves many different fields, such as user, department, folder and file type.
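
A policy engine of this kind might look something like the following sketch, which matches items against rules keyed on user, department, folder and file type and returns the retention period of the first matching rule. The `Rule` class, the sample rules and the `retention_for` function are assumptions for illustration; commercial policy engines have their own rule formats.

```python
from dataclasses import dataclass
from datetime import timedelta
from fnmatch import fnmatchcase
from typing import Optional

FIELDS = ("user", "department", "folder", "file_type")


@dataclass(frozen=True)
class Rule:
    retain: timedelta
    user: str = "*"
    department: str = "*"
    folder: str = "*"
    file_type: str = "*"

    def matches(self, item: dict) -> bool:
        # Every field must match its shell-style pattern ("*" matches anything).
        return all(fnmatchcase(item[f], getattr(self, f)) for f in FIELDS)


# Hypothetical rules, most specific first; the first match wins.
RULES = [
    Rule(retain=timedelta(days=7 * 365), department="finance", file_type="xlsx"),
    Rule(retain=timedelta(minutes=30), folder="/tmp/*"),
    Rule(retain=timedelta(days=2 * 365)),  # catch-all default
]


def retention_for(item: dict, rules: list = RULES) -> Optional[timedelta]:
    """Return the retention period of the first matching rule, or None."""
    for rule in rules:
        if rule.matches(item):
            return rule.retain
    return None


item = {"user": "avery", "department": "finance",
        "folder": "/shares/reports", "file_type": "xlsx"}
print(retention_for(item).days)  # 2555
```

Using `timedelta` rather than a day count lets one rule set cover periods from minutes to years, and ordering rules from most to least specific keeps the outcome predictable when several rules could apply.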

A data retention policy should include email messages. Emails pile up quickly, and some take up a lot of space, so set a reasonable timetable for retention. As with the data retention policy, the IT team should work with legal on email retention schedule details.

Regarding targets, object storage is a popular choice in a data retention policy, as it provides solid data protection at a moderate cost.

Public cloud storage is another common location for data that requires long-term retention. It's typically cheaper than on-premises storage, especially in infrequent access tiers. Cloud service providers offer off-site data protection, which is important in the event of a disruption to the organization's main data center. Speed of restore depends on the tier and size of the data set.

In addition, tape continues to play a key role in long-term data retention. Infrequently accessed historical data finds a good home on tape, although it takes longer to restore from tape than from other media. Storing data on tape for years is typically cheaper than storing it in the cloud and uses less energy than disk storage. Like the public cloud, tape also provides off-site storage.

What are some common data retention policy issues?

Data continues to increase dramatically, not only in primary storage but in backup data and archives as well. Backup takes a particularly burdensome toll when the same data gets backed up repeatedly. A data retention policy is one way to reduce volume and eventually automate the process of retaining data sets.

However, creating a data retention policy is complex. Setting a data retention schedule isn't cut and dried. Certain data sets require retention periods of different lengths for legal and operational reasons. Organizations will ultimately develop their own retention policies to fit their needs. But they must be careful when doing so, especially when instituting an automated form of data retention.

Storage can be a burden as well. That's why a good data retention policy is clear about the type of storage where retained data goes to optimize budget and space.

Proper data disposal

When a protected record's age exceeds the retention period set by the applicable data retention policy, the record must be disposed of properly. Organizations aren't always required by law to dispose of old data, but it's often in their best interest to do so.

Many organizations use an automated system, typically a dedicated archive software product, to securely delete data that no longer falls within the required data retention period. Automation ensures data is disposed of in the proper time frame without manual intervention. Some organizations might use their backup software's archiving functionality to automate data disposal.
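
An automated disposal run might be sketched as below. It deletes records whose retention period has ended, skips any that are under legal hold and returns an audit trail so the process stays repeatable and reviewable. The record layout, the `dispose_expired` function and the `delete` callback are hypothetical, and the callback is only a stand-in for whatever secure deletion mechanism the archive or backup product actually provides.

```python
from datetime import date, datetime, timezone
from typing import Callable, Iterable


def dispose_expired(
    records: Iterable[dict],
    today: date,
    delete: Callable[[str], None],
    on_hold: frozenset = frozenset(),
) -> list:
    """Delete records past their expiry date and return an audit trail."""
    audit = []
    for rec in records:
        if rec["expires"] > today:
            continue  # still inside its retention period
        if rec["id"] in on_hold:
            action = "skipped_legal_hold"
        else:
            delete(rec["id"])  # stand-in for a real secure-delete call
            action = "deleted"
        audit.append({
            "id": rec["id"],
            "action": action,
            "logged_at": datetime.now(timezone.utc).isoformat(),
        })
    return audit


deleted_ids = []
records = [
    {"id": "a", "expires": date(2023, 1, 1)},
    {"id": "b", "expires": date(2030, 1, 1)},
    {"id": "c", "expires": date(2022, 6, 30)},
]
log = dispose_expired(records, date(2024, 6, 1), deleted_ids.append, frozenset({"c"}))
print([(entry["id"], entry["action"]) for entry in log])
# [('a', 'deleted'), ('c', 'skipped_legal_hold')]
```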

Data retention is a crucial aspect of data governance. While data retention can be beneficial and is often mandatory, businesses should also be aware of the benefits of enacting a comprehensive data governance strategy.