Speeds of storage networking technologies rise as flash use spikes

InfiniBand networking technology first gained adoption in high-performance computing environments thanks to its ability to deliver data with low latency and low CPU overhead. It uses Remote Direct Memory Access (RDMA) to move data directly between application buffers and the network adapter, without the host CPU copying that data through operating system buffers. By bypassing the host operating system, RDMA eliminates much of the latency and CPU overhead of a conventional data transfer.

InfiniBand's ability to connect large clusters of compute nodes with low latency, while also providing quality of service and failover, has expanded its use beyond its original high-performance computing (HPC) sweet spot. It is also becoming a connectivity option for databases and enterprise resource planning systems, big data environments, demanding web applications and highly virtualized environments.

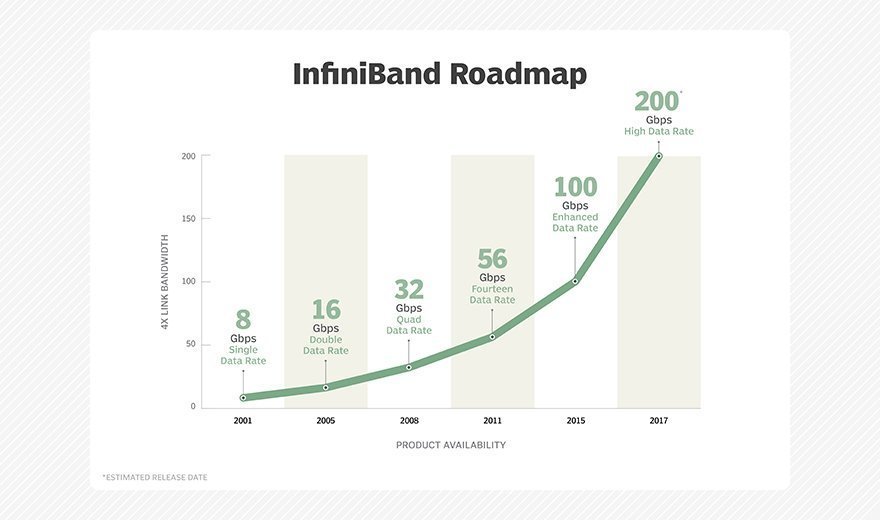

InfiniBand is typically implemented as four parallel lanes. The first products came to market in 2001 with a four-lane speed of 8 Gbps. In 2016, the most widely deployed version was Fourteen Data Rate (FDR), which delivers 56 Gbps over four lanes. Users also had the four-lane option of Enhanced Data Rate (EDR) at 100 Gbps.

InfiniBand can also be deployed across 12 lanes for greater bandwidth, such as 168 Gbps with FDR or 300 Gbps with EDR, but 12-lane links are uncommon.
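Each aggregate figure quoted above is simply the per-lane rate multiplied by the number of lanes. The Python sketch below illustrates that arithmetic under a few assumptions: the per-lane values are nominal headline rates (2 Gbps for the original 2001 generation, derived from its 8 Gbps four-lane speed and commonly called SDR; 14 Gbps for FDR; 25 Gbps for EDR), encoding and protocol overhead are ignored, and `PER_LANE_GBPS` and `link_speed_gbps` are hypothetical names, not part of any InfiniBand tool.

```python
# Nominal per-lane rates in Gbps, based on the figures quoted in the text.
# Encoding and protocol overhead are ignored, so these are headline numbers,
# not measured throughput. All names here are illustrative only.
PER_LANE_GBPS = {
    "SDR": 2,   # original 2001 generation: 8 Gbps over four lanes
    "FDR": 14,  # Fourteen Data Rate
    "EDR": 25,  # Enhanced Data Rate
}


def link_speed_gbps(generation: str, lanes: int) -> int:
    """Aggregate link speed: per-lane rate multiplied by the lane count."""
    if lanes < 1:
        raise ValueError("a link needs at least one lane")
    return PER_LANE_GBPS[generation] * lanes


if __name__ == "__main__":
    for gen in ("SDR", "FDR", "EDR"):
        four = link_speed_gbps(gen, 4)
        twelve = link_speed_gbps(gen, 12)
        print(f"{gen}: 4 lanes = {four} Gbps, 12 lanes = {twelve} Gbps")
```

Measured application throughput will typically be lower than these headline figures.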

According to the InfiniBand Trade Association (IBTA), development groups are working on faster InfiniBand speeds to enable the next generation of high-performance compute and storage platforms and to support the growing use of applications such as big data analytics, virtualization and machine learning.

High Data Rate (HDR), which delivers 200 Gbps over four lanes, is due to ship in 2017. The IBTA roadmap also lists two later data rates, Next Data Rate (NDR) and Extreme Data Rate (XDR), but the association has yet to release details on their bandwidth or expected release dates. InfiniBand vendors have said they expect NDR to deliver 100 Gbps per lane, or 400 Gbps over four lanes, in the 2019 to 2020 timeframe, and that XDR could double the speed again.
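The roadmap can be read as a per-lane rate that doubles with each generation: HDR's 200 Gbps over four lanes implies 50 Gbps per lane, and the vendors' NDR expectation of 100 Gbps per lane is one doubling beyond that. The Python sketch below projects four-lane speeds on that basis. It is an extrapolation, not an IBTA figure: the XDR value in particular assumes "another doubling" that the association had not confirmed, and `ROADMAP` and `projected_four_lane_gbps` are hypothetical names.

```python
# Generations on the IBTA roadmap after EDR, in order.
ROADMAP = ("HDR", "NDR", "XDR")

# HDR: 200 Gbps over four lanes, i.e. 50 Gbps per lane (from the text).
HDR_PER_LANE_GBPS = 50


def projected_four_lane_gbps(hdr_per_lane: int = HDR_PER_LANE_GBPS) -> dict[str, int]:
    """Four-lane speed per generation, assuming the per-lane rate doubles each step.

    NDR at 100 Gbps per lane matches the vendor expectation quoted in the text;
    the XDR value is only an extrapolation and was not confirmed by the IBTA.
    """
    speeds: dict[str, int] = {}
    per_lane = hdr_per_lane
    for generation in ROADMAP:
        speeds[generation] = per_lane * 4  # four lanes per link
        per_lane *= 2  # assumed doubling for the next generation
    return speeds


if __name__ == "__main__":
    for generation, gbps in projected_four_lane_gbps().items():
        print(f"{generation}: {gbps} Gbps over four lanes")
```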