clustered network-attached storage (NAS) system

What is a clustered network-attached storage (NAS) system?

A clustered network-attached storage (NAS) system is a scale-out storage platform made up of multiple NAS nodes networked together into a single cluster. The platform pools the storage resources within the cluster and presents them as a unified file system. To achieve this, the platform uses a distributed file system that runs concurrently on every NAS node in the cluster. The file system enables each node to access all files from any of the clustered nodes, regardless of the physical location of the file.

The number and location of the nodes are transparent to the users and applications accessing files. Today's clustered NAS systems can be scaled out to include numerous nodes and provide petabytes (PB) of raw capacity. For example, the latest NetApp Fabric-Attached Storage (FAS) arrays -- FAS8700/FAS9000 series -- can support up to 24 nodes in a cluster and up to 176 PB of raw capacity, in either a 4U or 8U controller chassis form factor.

Clustered NAS systems typically provide transparent replication and fault tolerance. If one or more nodes fail, the system continues to function without data loss. In addition, data and metadata can be striped across both the storage nodes and the underpinning block storage subsystems -- direct-attached storage (DAS) or storage area network (SAN).
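To make striping and fault tolerance concrete, the following is a minimal Python sketch, not any vendor's implementation. It assumes simple round-robin placement with full copies of each stripe on two nodes; production systems often use erasure coding or more sophisticated layouts instead. The names `stripe_with_replicas` and `read_back`, and the node names, are hypothetical.

```python
def stripe_with_replicas(data, nodes, stripe_size=4, replicas=2):
    """Split data into fixed-size stripes and assign each stripe to
    `replicas` distinct nodes, chosen round-robin (an assumed policy)."""
    if replicas > len(nodes):
        raise ValueError("need at least as many nodes as replicas")
    placement = []
    for offset in range(0, len(data), stripe_size):
        index = offset // stripe_size
        chunk = data[offset:offset + stripe_size]
        owners = [nodes[(index + r) % len(nodes)] for r in range(replicas)]
        placement.append((chunk, owners))
    return placement


def read_back(placement, failed_nodes):
    """Reassemble the data, using any surviving copy of each stripe."""
    result = bytearray()
    for chunk, owners in placement:
        if all(owner in failed_nodes for owner in owners):
            raise IOError("every copy of a stripe is on a failed node")
        result += chunk
    return bytes(result)


if __name__ == "__main__":
    nodes = ["node-a", "node-b", "node-c"]
    layout = stripe_with_replicas(b"clustered NAS demo", nodes)
    # One failed node is tolerated because each stripe has a second copy.
    print(read_back(layout, failed_nodes={"node-b"}))
```

With two copies per stripe, the sketch survives any single node failure. Losing two nodes that hold both copies of the same stripe makes that stripe unreadable, which is why real clusters let administrators tune the protection level.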

There is no fixed configuration for NAS clusters, with each vendor taking a different approach to how the controllers and storage devices are deployed. However, they all rely on a distributed file system to present the data and an operating system (OS) to manage the volumes and protect the data. The file system and OS work hand in hand to deliver unified file services. In some cases, the file and operating systems are combined into a single software layer, such as Dell EMC's OneFS OS.

Although clustering appears similar to file virtualization, the key difference is that, with a few exceptions, the system nodes must be from the same vendor and part of the same product line from that vendor. For example, an organization can create a cluster made up of Dell's PowerStore T appliances or PowerStore X appliances, but a single cluster cannot contain both types of Dell appliances. However, administrators can typically configure a cluster's nodes with different types of storage devices or with different capacities, unless two nodes are set up in a failover configuration.

What are the main benefits of clustered NAS storage?

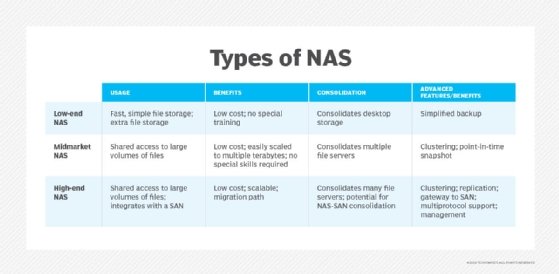

Traditional NAS systems have served organizations well over the years, but the rapid growth of data -- especially unstructured data -- has forced decision-makers to look for more effective ways to provide storage that can meet the demands of rapidly evolving workloads.

Clustered NAS provides a scale-out storage solution that can deliver the performance and capacity needed to support many of these workloads, including those that require a high degree of concurrency. A clustered NAS system uses load balancing to spread the data across storage nodes and present those nodes as a single repository that contains all the data, helping to simplify user access and application delivery.
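The idea of balancing data across nodes while presenting a single repository can be sketched in a few lines of Python. This is an illustration under stated assumptions: the `ClusterNamespace` class is hypothetical, placement simply picks the node with the least data stored, and whole files live on one node. Actual products use their own placement and balancing policies.

```python
class ClusterNamespace:
    """Toy single namespace over several nodes (hypothetical design)."""

    def __init__(self, node_names):
        self.used = {name: 0 for name in node_names}  # bytes stored per node
        self.location = {}                            # path -> node name
        self.blobs = {}                               # (node, path) -> data

    def write(self, path, data):
        # If the path already exists, release its old space first.
        if path in self.location:
            old_node = self.location[path]
            self.used[old_node] -= len(self.blobs.pop((old_node, path)))
        # Assumed balancing policy: least-used node, ties broken by name.
        node = min(self.used, key=lambda n: (self.used[n], n))
        self.blobs[(node, path)] = data
        self.location[path] = node
        self.used[node] += len(data)
        return node

    def read(self, path):
        # Callers use only the path; the owning node is looked up internally.
        node = self.location[path]
        return self.blobs[(node, path)]


if __name__ == "__main__":
    ns = ClusterNamespace(["node-a", "node-b", "node-c"])
    for name, size in [("/video.bin", 100), ("/a.txt", 10), ("/b.txt", 10)]:
        ns.write(name, b"x" * size)
    print(ns.write("/c.txt", b"x" * 5))  # lands on a lightly used node
    print(len(ns.read("/video.bin")))
```

The caller never names a node when reading, which mirrors how users and applications see one repository regardless of where the data physically resides.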

A clustered NAS system also offers more flexibility than traditional NAS. If an organization needs more compute or storage resources, administrators can add nodes to the cluster or beef up the individual nodes, often with the ability to scale compute (performance) and storage (capacity) resources independently. They can also remove components from the cluster as workload requirements change. Most contemporary scale-out NAS systems include built-in mechanisms that make it quick and easy to modify the cluster infrastructure and configuration.

A cluster's nodes can also be set up in a failover configuration that protects against data loss and extended downtime in the event of component failure. For example, a six-node cluster might be organized into three sets, with each set including two nodes deployed in an active/passive configuration. If the active node in a set fails, its workload automatically fails over to the passive node within that set.
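The six-node example can be modeled with a short Python sketch. It is a simplified illustration, assuming nodes are paired in list order and that failover is an instant role swap; the `FailoverPair` class and `build_pairs` helper are hypothetical names, and real clusters add health checks, state synchronization and failback.

```python
class FailoverPair:
    """Two nodes in an active/passive arrangement (simplified model)."""

    def __init__(self, active, passive):
        self.active = active
        self.passive = passive
        self.failed = set()

    def mark_failed(self, node):
        if node not in (self.active, self.passive):
            raise ValueError(f"{node} is not part of this pair")
        self.failed.add(node)
        # Promote the passive node only if it is still healthy.
        if node == self.active and self.passive not in self.failed:
            self.active, self.passive = self.passive, self.active

    def serving_node(self):
        """Return the node currently serving data, or None if both are down."""
        return None if self.active in self.failed else self.active


def build_pairs(nodes):
    """Group an even-length list of nodes into consecutive failover pairs."""
    if len(nodes) % 2:
        raise ValueError("an even number of nodes is required")
    return [FailoverPair(nodes[i], nodes[i + 1]) for i in range(0, len(nodes), 2)]


if __name__ == "__main__":
    pairs = build_pairs([f"node-{i}" for i in range(1, 7)])  # three sets of two
    pairs[0].mark_failed("node-1")
    print([pair.serving_node() for pair in pairs])
```

In this model a failure in one set does not affect the other two sets, and a set stops serving data only when both of its nodes are down.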
