Networking server-based storage

Direct-attached or server-based storage is gaining renewed attention as emerging technologies offer easy ways to pool and share this highly scalable storage resource.

In recent years, traditional storage systems have been challenged by alternate approaches to building storage arrays. Those traditional systems typically package storage controllers, disks, interfaces and firmware into a proprietary array whose components and inner workings are esoteric and usually only privy to the array vendor. Because those systems are costly, complex and lack openness, alternatives have emerged that have gained the attention of some storage shops.

A changing computing landscape that increasingly favors mobile and cloud computing has also been an impetus for more open and cost-effective storage platforms to surface.

Among the most promising alternatives to traditional storage array architectures are products that network directly attached server-based storage into a shared storage pool -- storage systems that are also known as networked server-based storage. Being able to leverage commodity x86-based servers and their directly attached disks, and combine that with storage software that runs on standard servers to provide a shared storage pool, enables more open storage platforms at a price point far below that of traditional proprietary systems. Networked server-based storage is offered by a growing list of startups and has been the favorite storage architecture of cloud service providers, but it's now finding its way into the product portfolios of established storage vendors.

Making the case for networked server-based storage

Networked server-based storage is succeeding for several reasons. First and foremost, it's cost-effective. Traditionally, storage arrays have been based on proprietary hardware and controllers optimized for storage processing to cope with the low-latency and high-bandwidth requirements of storage arrays that had to be accessed by a host of systems. Storage vendors have been charging dearly for that approach, establishing a lucrative, high-margin storage hardware business. But as multicore x86-based systems, interfaces and networks became faster and the performance gap compared to proprietary storage hardware narrowed and eventually closed, traditional storage vendors stayed the course, keeping storage hardware proprietary and margins high. It was just a matter of time before networked storage systems that run on standard x86-based servers emerged.

"Networked server-based storage that uses commodity server hardware is less expensive, because you're not paying the vendor markup for HBAs [host bus adapters], adapters, disks and other components," said Terri McClure, a senior analyst at Milford, Mass.-based Enterprise Strategy Group (ESG).

Networked server-based storage reaps the benefits of virtualization. Decoupling the storage software from the underlying hardware enables the storage stack to run on all types of hardware, including low-cost commodity x86-based systems and their direct-attached storage. By taking advantage of virtualization, networked server-based storage helps increase storage utilization by pooling otherwise underutilized direct-attached server storage into a shared storage resource. Some networked server-based storage products combine storage and virtual machine (VM) processing on the same server hardware to further increase server resource utilization; this means a more cost-efficient data center that reduces data center hardware, and lowers power consumption and space requirements.

Networked server-based storage is also easier to manage. Storage arrays, especially Fibre Channel systems, are complex to deploy and manage, and require the expertise of storage specialists. Networked server-based storage, on the other hand, can be managed by the server team, which takes fewer IT resources and results in lower operational costs. Ease of management is the other main reason for networked server-based storage having done so well with cloud service providers and consumer-facing Web 2.0 companies.

The many faces of networked server-based storage

Contemporary networked server-based storage products come in a variety of flavors and from different roots, but they have one thing in common: They all use standard server hardware and aggregate directly attached server storage into a single shared storage pool.
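
The pooling idea can be illustrated with a minimal sketch. The host names, capacities and helper functions below are hypothetical and are not taken from any product described in this article; the sketch also ignores the capacity a real product would set aside for redundancy. It only shows why free space that is stranded on individual servers becomes usable once it is aggregated.

```python
from dataclasses import dataclass


@dataclass
class Host:
    """A commodity server with directly attached disks (hypothetical model)."""
    name: str
    capacity_gb: int  # raw local disk capacity
    used_gb: int      # capacity already consumed


def pool_summary(hosts):
    """Aggregate the local disks of all hosts into one logical pool."""
    total = sum(h.capacity_gb for h in hosts)
    used = sum(h.used_gb for h in hosts)
    return {"capacity_gb": total, "used_gb": used, "free_gb": total - used}


def stranded_free_gb(hosts, request_gb):
    """Free space on hosts that are individually too full to serve a request."""
    return sum(h.capacity_gb - h.used_gb for h in hosts
               if h.capacity_gb - h.used_gb < request_gb)


hosts = [
    Host("node-a", 2000, 1500),
    Host("node-b", 2000, 400),
    Host("node-c", 2000, 1700),
]
summary = pool_summary(hosts)
print(summary)                        # pooled view across all three hosts
print(stranded_free_gb(hosts, 1000))  # free space no single host could offer
```

In this made-up example, no single host can satisfy a 2,000 GB request, but the pool as a whole has 2,400 GB free.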

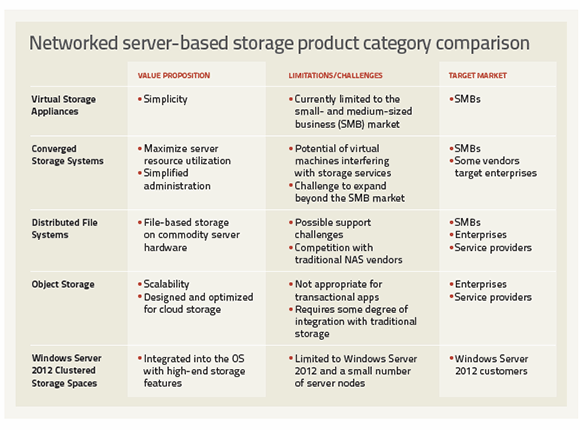

Virtual storage appliances (VSAs) run the storage software within VMs and are distributed as virtual machine images that are deployed to one or multiple physical hosts. They combine the directly attached storage of the hosts they run on into a shared storage pool. The number of hosts supported by currently available VSAs varies by vendor. VMware's vSphere Storage Appliance combines the local storage of up to three hosts into a shared storage resource that's accessed via the Network File System and managed through vCenter. The Hewlett-Packard (HP) StoreVirtual VSA, which is based on the LeftHand OS, eclipses the VMware VSA by pooling the local storage of up to 16 hosts to present it as shared storage that's accessible via iSCSI. NetApp's Data Ontap Edge is a VM that runs Data Ontap, currently supporting only a single server node, but able to seamlessly interact with other NetApp storage.

The current crop of VSAs is well-suited for small environments, such as small- and medium-sized businesses (SMBs) or branch offices of larger companies. Because they can be deployed on existing servers without additional storage hardware, they are very cost-effective, as well as easy to deploy and support. VSAs clearly have the potential to expand beyond the SMB market; today's limits are mostly imposed by VSA vendors that are either protecting their lucrative high-end storage business -- in the case of NetApp and to some extent HP -- or trying to avoid upsetting the existing ecosystem, which could harm adoption, as in the case of VMware. VMware in particular, with its VSA and the potential of VMware Virtual SAN (vSAN), is walking a fine line so it won't agitate storage vendors that have integrated their storage systems with the various VMware APIs.

Converged storage systems. To maximize server resources, a group of startups has emerged that offers products that combine storage and VM processing on the same server hardware. The idea is to dedicate a certain percentage of the computing resources of the underlying hosts to storage tasks and the remaining resources to virtual machines. Besides high server resource utilization, ease of management and low cost are the key benefits of combining storage and VM processing in a single system that usually consists of multiple server nodes. On the downside, combining storage and VMs in a single system can limit the ability to scale and increases the likelihood of VM processing interfering with storage processing and vice versa.
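
As a rough illustration of that split, the sketch below reserves a fixed share of a node's CPU cores and memory for the storage stack and leaves the rest to VMs. The function name, the 25% share and the node size are assumptions chosen for the example; actual converged products decide this in their own ways.

```python
def split_node_resources(cores, ram_gb, storage_share):
    """Reserve a fixed share of a node's CPU and RAM for the storage stack."""
    if not 0 < storage_share < 1:
        raise ValueError("storage_share must be between 0 and 1")
    # Always give the storage stack at least one core.
    storage_cores = max(1, round(cores * storage_share))
    storage_ram = ram_gb * storage_share
    return {
        "storage": {"cores": storage_cores, "ram_gb": storage_ram},
        "vms": {"cores": cores - storage_cores, "ram_gb": ram_gb - storage_ram},
    }


# Hypothetical 16-core, 128 GB node with a quarter reserved for storage.
plan = split_node_resources(cores=16, ram_gb=128, storage_share=0.25)
print(plan)
```

A static reservation like this is the simplest way to limit the interference described above, at the price of leaving reserved resources idle when the storage stack is quiet.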

Currently, a handful of vendors offer converged virtualization and storage products. Scale Computing's HC3 runs the open source Red Hat KVM hypervisor on top of its server-based multinode scale-out network-attached storage (NAS) to target the SMB market. Nutanix runs its storage stack as an additional service parallel to the VMs, virtualizing storage from physical server nodes into a unified pool of scale-out converged storage, targeting both SMBs and enterprises. The SimpliVity OmniCube scale-out NAS, with features like real-time deduplication and compression, can also host VMs and is intended for both SMBs and enterprises. By adding virtual machine processing, converged storage systems take the value proposition of networked server-based storage to a new level.

Distributed file systems. Even though the NAS market is dominated by large storage vendors, open source distributed file systems have enabled others to build NAS systems that run on commodity x86-based servers. A case in point is Red Hat Storage Server, which is based on the GlusterFS scale-out NAS file system Red Hat obtained with its acquisition of Gluster in 2011. Another example is Oracle ZFS (originally the Zettabyte File System), which was developed by Sun and is used in Oracle's ZFS Storage 7000 series; ZFS is open source software that has found its way into products from vendors such as Nexenta, which markets its ZFS product as a software-defined storage offering.

"Nexenta uses ZFS and puts management software [around it] to sell it to [users to] create NAS storage or NAS gateways," said Greg Schulz, founder and senior analyst at Stillwater, Minn.-based StorageIO. Similarly, the Apache Hadoop Distributed File System runs on commodity servers, and it supports anywhere from a few to thousands of server nodes to create large shared-storage pools.

Networked server-based storage products based on a distributed file system can be a cost-effective storage option for large amounts of file-based content. The challenges of forgoing a more established NAS product for a more novel approach are offset by significant cost savings and by product-specific features and behaviors that may not be available on a traditional NAS system.

Object storage. Object storage is in many ways similar to scale-out NAS, but instead of files it manages objects with unique identifiers. Objects support rich metadata far beyond the file-system attributes of NAS. Object storage is usually accessed through RESTful HTTP APIs, and data redundancy is achieved by storing objects multiple times on different nodes.
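
A toy in-memory model makes these three properties concrete: unique identifiers, free-form metadata and redundancy through multiple copies on different nodes. The class, its placement rule and the node names are hypothetical and far simpler than any of the products named in this article; the HTTP layer is left out entirely.

```python
import hashlib
import uuid


class ToyObjectStore:
    """In-memory sketch: each object is copied to `replicas` distinct nodes."""

    def __init__(self, node_names, replicas=2):
        if replicas > len(node_names):
            raise ValueError("need at least as many nodes as replicas")
        self.nodes = {name: {} for name in node_names}
        self.replicas = replicas

    def _placement(self, object_id):
        # Hash the ID to pick a starting node, then take the next nodes in order.
        names = sorted(self.nodes)
        digest = hashlib.sha256(object_id.encode()).hexdigest()
        start = int(digest, 16) % len(names)
        return [names[(start + i) % len(names)] for i in range(self.replicas)]

    def put(self, data, metadata=None):
        object_id = uuid.uuid4().hex  # unique identifier instead of a file path
        for name in self._placement(object_id):
            self.nodes[name][object_id] = {"data": data,
                                           "metadata": dict(metadata or {})}
        return object_id

    def get(self, object_id, failed_nodes=()):
        # Read from the first replica that is still reachable.
        for name in self._placement(object_id):
            if name not in failed_nodes and object_id in self.nodes[name]:
                return self.nodes[name][object_id]
        raise KeyError(object_id)


store = ToyObjectStore(["node-a", "node-b", "node-c"], replicas=2)
oid = store.put(b"quarterly report",
                {"content-type": "application/pdf", "owner": "finance"})
first_replica = store._placement(oid)[0]
record = store.get(oid, failed_nodes=[first_replica])  # survives one node loss
```

Adding capacity in such a design means adding node names to the store, although a real system would also have to rebalance existing objects, which this sketch does not attempt.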

To meet the requirements of the cloud storage market, object storage is designed to run distributed on multiple server nodes, and it scales by simply adding more server nodes. In other words, object storage is designed as networked server-based storage. Object storage is best suited for storing file-based content for both internal and external applications. It's unfit for transaction-based systems, such as databases and transaction processing applications. While many Web 2.0 companies created their own proprietary object stores, object storage products, such as Caringo CAStor, EMC Atmos, Hitachi Content Platform and NetApp StorageGRID among others, have been available for several years.

Windows Server 2012 Clustered Storage Spaces. With Windows Storage Spaces (WSS), Server Message Block 3.0 enhancements, data deduplication and thin provisioning support, Windows Server 2012 has all the ingredients for building high-end, networked server-based storage systems. By combining WSS with the failover clustering feature, Windows Server 2012 provides for building clustered storage spaces that consist of multiple server nodes. Clustered Storage Spaces combine a small number of servers, typically two or four, with a set of serial-attached SCSI (SAS) JBOD enclosures connected to all servers. Access is unified into a single namespace via Cluster Shared Volumes that can be accessed by all server nodes, regardless of the number of servers, JBOD enclosures and provisioned virtual disks. A January 2013 ESG lab report concludes that the storage efficiency, agility and transparency of new and improved features make the high-performing, cost-effective Windows Server 2012 a no-brainer for small and large businesses alike.

Trending toward software-defined infrastructure services

The benefits of low cost, high resource utilization and simplified management bode well for networked server-based storage platforms. The rapid growth of unstructured data and a need to scale seamlessly internally and into the cloud are key drivers that should help networked server-based storage solutions expand the market. From a longer term perspective, virtualization and the move toward software-defined infrastructure and data centers will result in an increased decoupling of infrastructure services from the underlying hardware. Networked server-based storage is just a step toward a future where VMs, networking, storage, security and other infrastructure services run on top of shared physical hardware components.

About the author:

Jacob N. Gsoedl is a freelance writer and a corporate director for business systems.